KAUST/ARAMCO Master’s in

Data Science & Analytics Statistical Modeling of Data

Paula Moraga, Ph.D.

Prof. Statistics, KAUST

paula.moraga@kaust.edu.sa

Statistical Modeling of Data

Instructors

- Professor Paula Moraga (Modules 1 - 4)

- Professor Hernando Ombao (Modules 5 - 8)

Teaching Assistants

- Ms. Mara Talento (Modules 1 - 4)

- Ms. Farah Gomawi (Modules 5 - 8)

Course description

This course covers several topics essential to conducting data analysis.

The goals are to train students

- to conduct exploratory data analysis through visualization and summary statistics

- to obtain confidence intervals and conduct tests of hypotheses

- to build statistical general models, estimate parameters in these models, and model associations between outcome and explanatory variables

- to conduct inference and forecasting in linear regression, logistic regression, categorical data analysis, and introductory time series and spatial analysis

Course materials

https://www.paulamoraga.com/course-aramco

Course schedule

Thursday May 7: Statistical inference foundations

| 9:00 am - 10:00 am | Probability distributions and descriptive analysis |

| 10:00 am - 10:45 am | Statistical inference and the Central Limit Theorem |

| 10:45 am - 11:00 am | Coffee break |

| 11:00 am - 12:00 pm | Confidence intervals |

| 12:00 pm - 1:00 pm | Lunch break |

| 1:00 pm - 2:30 pm | Hypothesis testing |

| 2:30 pm - 2:45 pm | Coffee break |

| 2:45 pm - 4:00 pm | Practical |

Friday May 8: ANOVA, linear regression, and categorical variables

| 9:00 am - 10:00 am | ANOVA |

| 10:00 am - 10:45 am | Simple and multiple linear regression |

| 10:45 am - 11:00 am | Coffee break |

| 11:00 am - 12:00 pm | Practical |

| 12:00 pm - 1:00 pm | Lunch break |

| 1:00 pm - 2:30 pm | Categorical variables |

| 2:30 pm - 2:45 pm | Coffee break |

| 2:45 pm - 4:00 pm | Practical |

Saturday 9: Interactions, assumptions, and unusual observations

| 9:00 am - 10:00 am | Interactions |

| 10:00 am - 10:45 am | Practical |

| 10:45 am - 11:00 am | Coffee break |

| 11:00 am - 12:00 pm | Model assumptions |

| 12:00 pm - 1:00 pm | Lunch break |

| 1:00 pm - 2:30 pm | Unusual observations |

| 2:30 pm - 2:45 pm | Coffee break |

| 2:45 pm - 4:00 pm | Practical |

Sunday 10: Model selection, GLMs, and spatial analysis

| 9:00 am - 10:00 am | Model selection |

| 10:00 am - 10:45 am | Practical |

| 10:45 am - 11:00 am | Coffee break |

| 11:00 am - 12:00 pm | Introduction to Logistic and Poisson regression |

| 12:00 pm - 1:00 pm | Lunch break |

| 1:00 pm - 2:30 pm | Spatial analysis |

| 2:30 pm - 2:45 pm | Coffee break |

| 2:45 pm - 3:30 pm | Practical |

| 3:30 pm - 4:00 pm | Wrap-up |

Evaluation

There will be two papers required for this course. Paper 1 will be on data analysis for the first half of the course, Paper 2 will be on the second half of the course. For each of the projects, students will analyze a dataset using the statistical techniques learned during the course. Students will need to submit a draft and a final written report, together with reproducible code.

Students will need to submit their work via Blackboard by the due date.

May 17 - deadline for Paper 1 draft (optional)

May 31 - deadline for Paper 1 FINAL

June 18 - deadline for Paper 2 draft

June 28 - deadline for Paper 2 FINAL

Grading

Paper 1: 10% draft + 40% final (If draft is not submitted, 50% final)

Paper 2: 10% draft + 40% final

Evaluation

Both papers 1 and 2 will include a title, abstract, introduction, data, methods, results and interpretation, a brief discussion section, and references. Make sure you accurately interpret your results, tables and figures in the context of the study. Tables and figures captions need to be self-contained.

For the reports, please use a maximum of 10 pages with 12 point font, one column, and 2cm margins, including tables and figures. Reports that exceed the specified number of pages will not be assessed. You will be judged on the quality and not on the quantity of material you submit.

You will need to submit via Blackboard a zip file containing the following:

- written report

- reproducible code and data. The code should load and analyze the data, work without errors, and include comments

Questions?

Probability distributions

Random variables

A random process is a random phenomenon or experiment that can have a range of outcomes. For example, tossing a coin, rolling a dice, or measuring the height of a randomly selected individual.

A random variable is a variable whose possible values are numbers associated to the outcomes of a random process.

Random variables are usually denoted with capital letters such as \(X\) or \(Y\).

Example

Random process: tossing a coin

Possible outcomes: heads and tails

Random variable: \(X\)

\(X =\) outcome when tossing a coin

\(X=1\) if heads and \(X = 0\) if tails

There are two types of random variables: discrete and continuous

A discrete random variable is one which may take only a finite or countable infinite set of values

\(X =\) outcome when tossing a coin (\(X=1\) if heads and \(X = 0\) if tails)

\(X =\) number of members in a family randomly selected in USA (e.g., 1, 2, 3)

\(X =\) number of patients admitted in a hospital in a given day

\(X =\) number of defective light bulbs in a box of 20

\(X =\) year student randomly selected in the University was born (1998, 2000)

A continuous random variable is one which takes an infinite non-countable number of possible values

\(X\) = height student randomly selected in the department (1.65 m, 1.789 m)

\(X\) = time required to run 1 Km (e.g., 4.56 min, 5.123 min)

\(X\) = amount of sugar in an orange (e.g., 9.21 g, 12.2 g)

Probability distribution

A probability distribution of a random variable \(X\) is the specification of all the possible values of \(X\) and the probability associated with those values.

Probability mass function

If \(X\) is discrete, the probability distribution is called probability mass function

| Value of \(X\) | \(P(X = x_i)\) |

|---|---|

| \(x_1\) | \(p_1\) |

| \(x_2\) | \(p_2\) |

| … | |

| \(x_n\) | \(p_n\) |

The probability mass function must satisfy

\(0 < p_i < 1\) for all \(i\), and \(\sum_{i=1}^n p_i = p_1 + p_2 + \ldots + p_n = 1\)

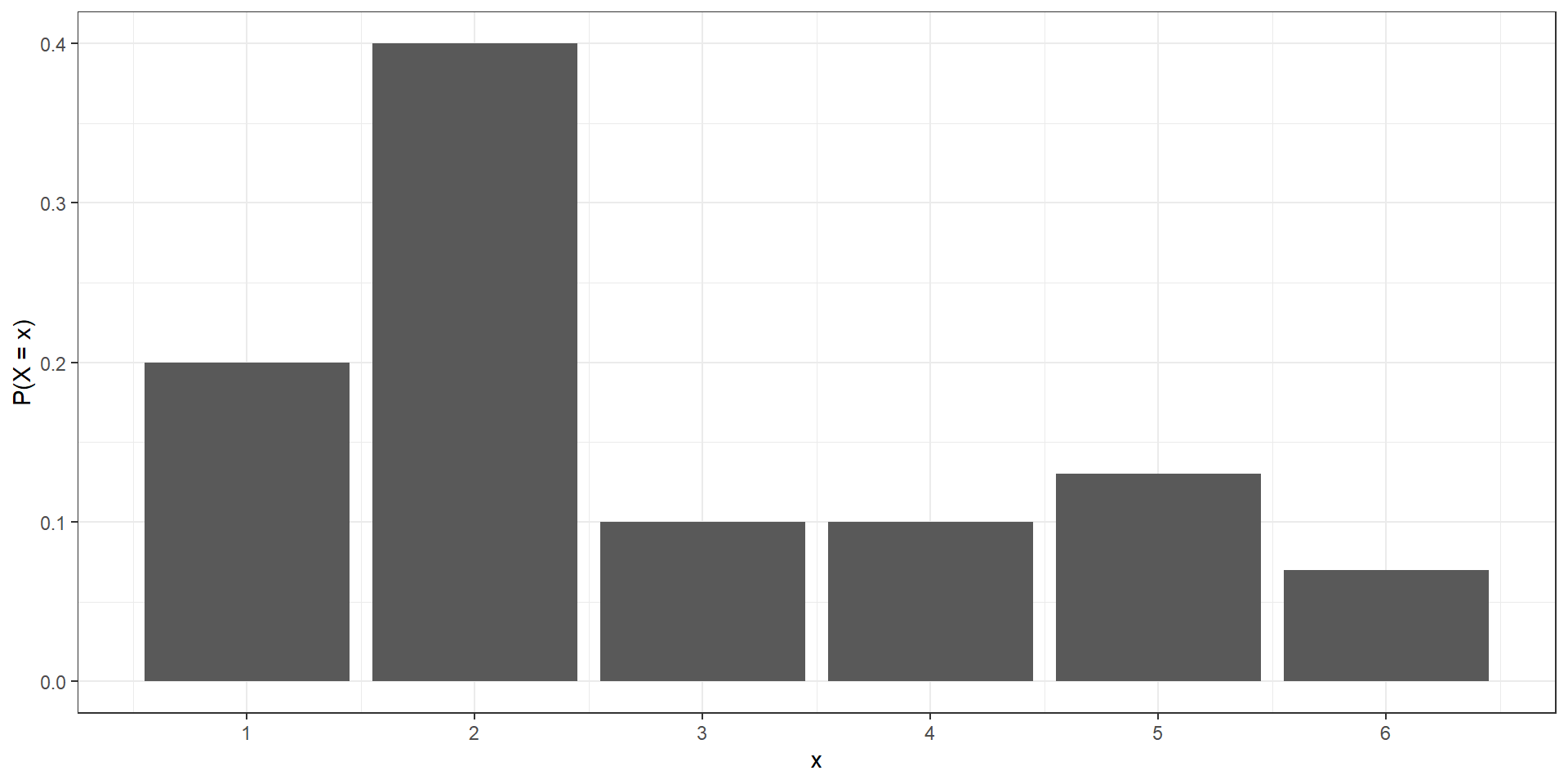

Example

\(X\) discrete random variable with the following probability mass function:

| - | ||||||

|---|---|---|---|---|---|---|

| Outcome | 1 | 2 | 3 | 4 | 5 | 6 |

| Probability | 0.20 | 0.40 | 0.10 | 0.10 | 0.13 | 0.07 |

- Check the validity of the probability mass function

- Calculate the probability that \(X\) is equal to 2 or 3

- Calculate the probability that \(X\) is greater than 1

| - | ||||||

|---|---|---|---|---|---|---|

| Outcome | 1 | 2 | 3 | 4 | 5 | 6 |

| Probability | 0.20 | 0.40 | 0.10 | 0.10 | 0.13 | 0.07 |

- Check the validity of the probability mass function

Probabilities between 0 and 1 and sum to 1

- Calculate the probability that \(X\) is equal to 2 or 3

P(X = 2 or X = 3) = P(X = 2) + P(X = 3) = 0.4 + 0.1 = 0.5

- Calculate the probability that \(X\) is greater than 1

P(X > 1) = 1 - P(X = 1) = 1 - 0.2 = 0.8

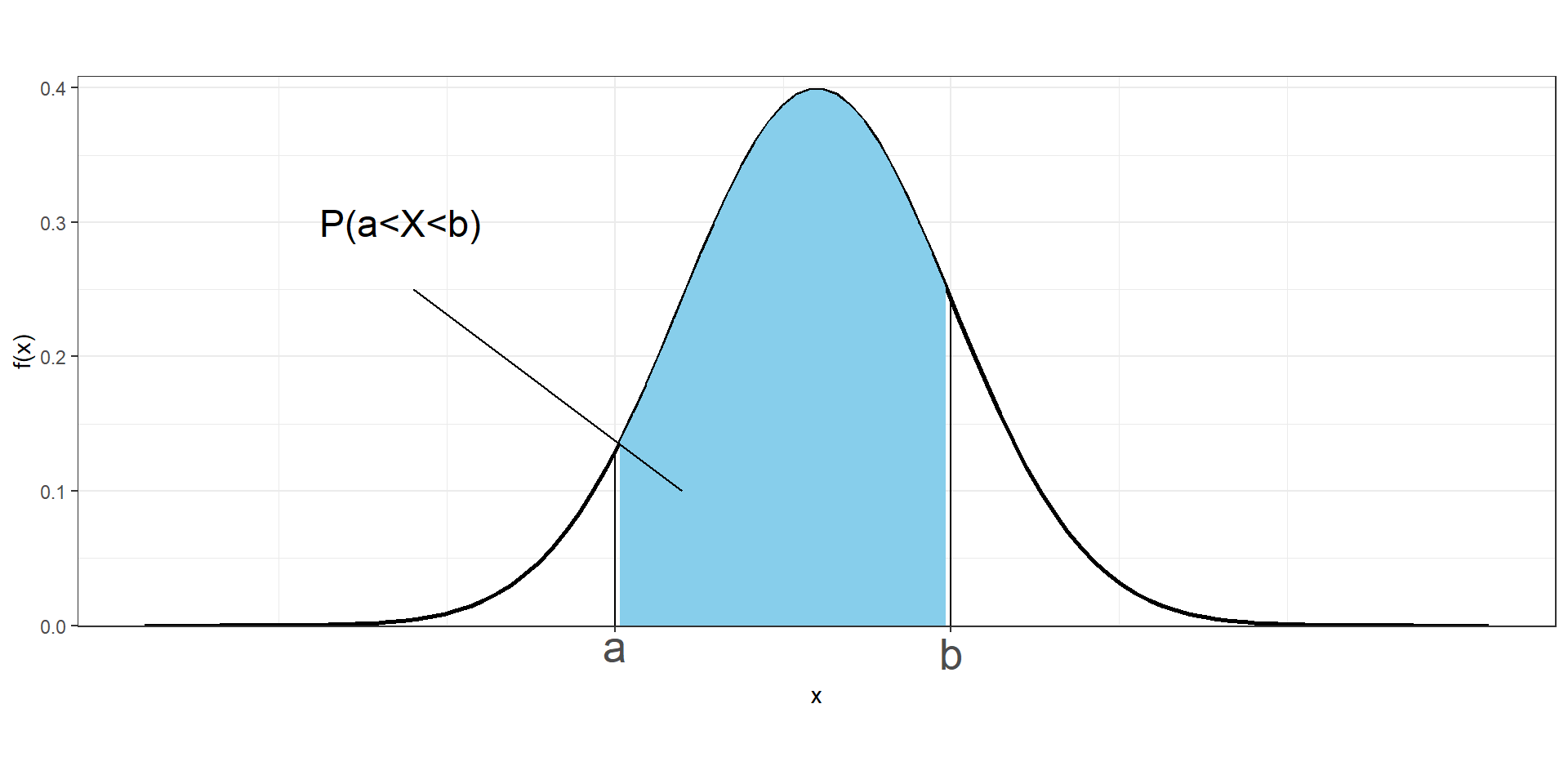

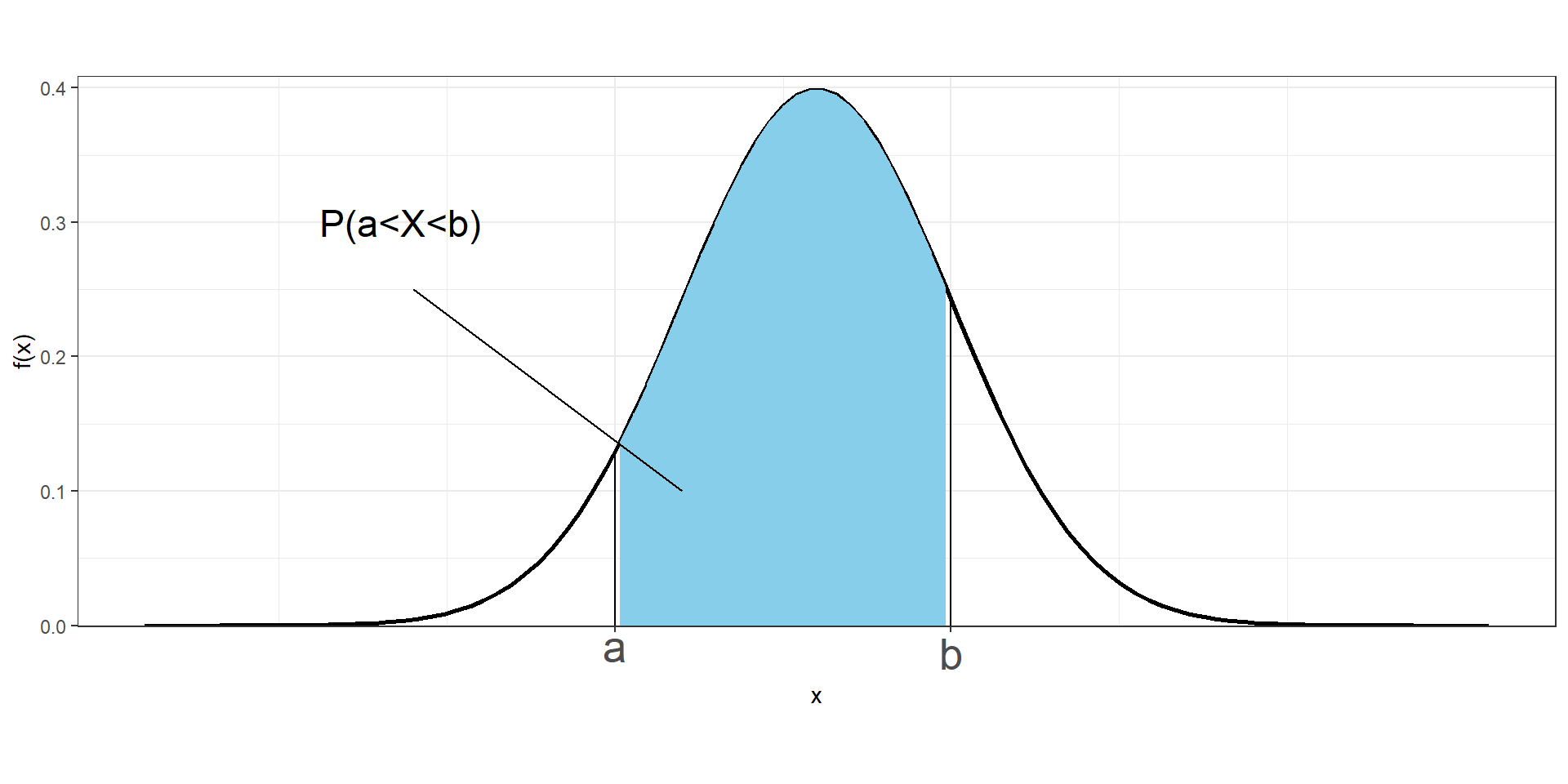

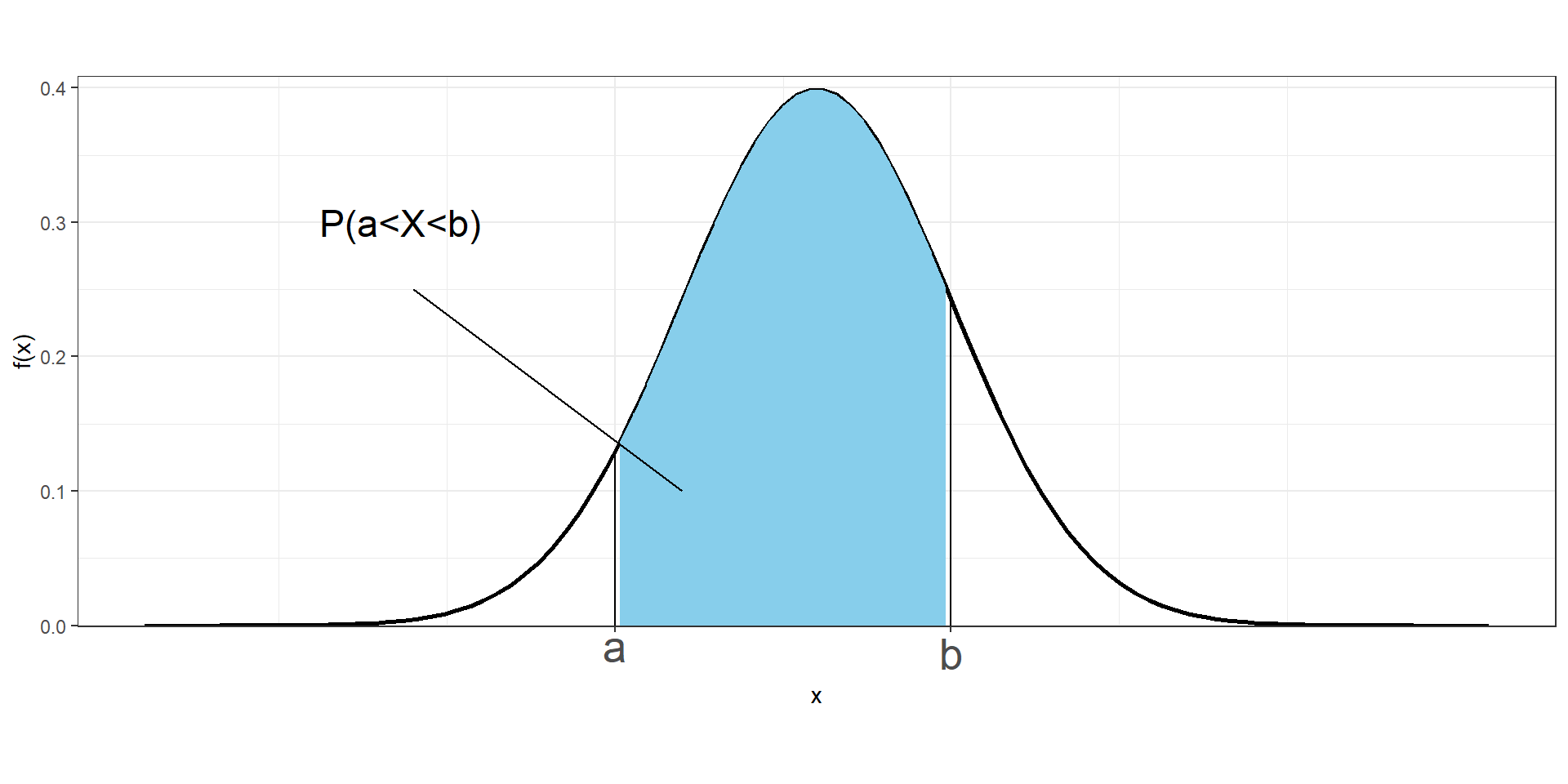

Probability density function

If \(X\) is continuous, the probability distribution is called probability density function. A continuous variable \(X\) takes an infinite number of possible values, and the probability of observing any single value is equal to 0, \(P(X = x) = 0\)

Therefore, instead of discussing the probability of \(X\) at a specific value \(x\), we deal with \(f(x)\), the probability density function of \(X\) at \(x\).

We cannot work with probabilities of \(X\) at specific values, but we can assign probabilities to intervals of values.

The probability of \(X\) being between \(a\) and \(b\) is given by the area under the probability density curve from \(a\) to \(b\).

\[P(a \leq X\leq b) = \int_a^b f(x)dx\]

The probability density function \(f(x)\) must satisfy the following:

- The probability density function has no negative values (\(f(x) \geq 0\) for all \(x\))

- Total area under the curve is equal to 1 (\(\int_{-\infty}^{-\infty} f(x)dx = 1\))

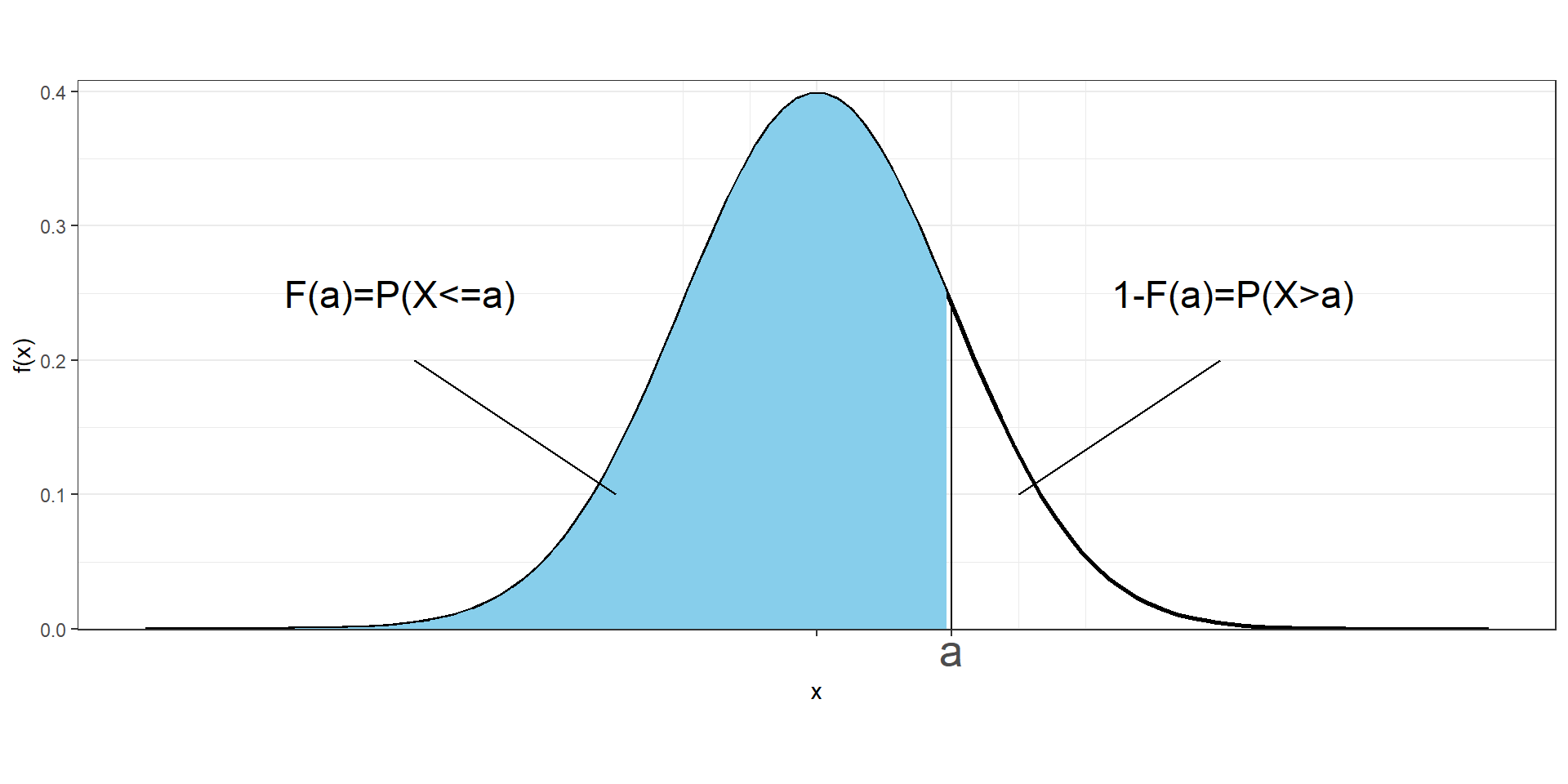

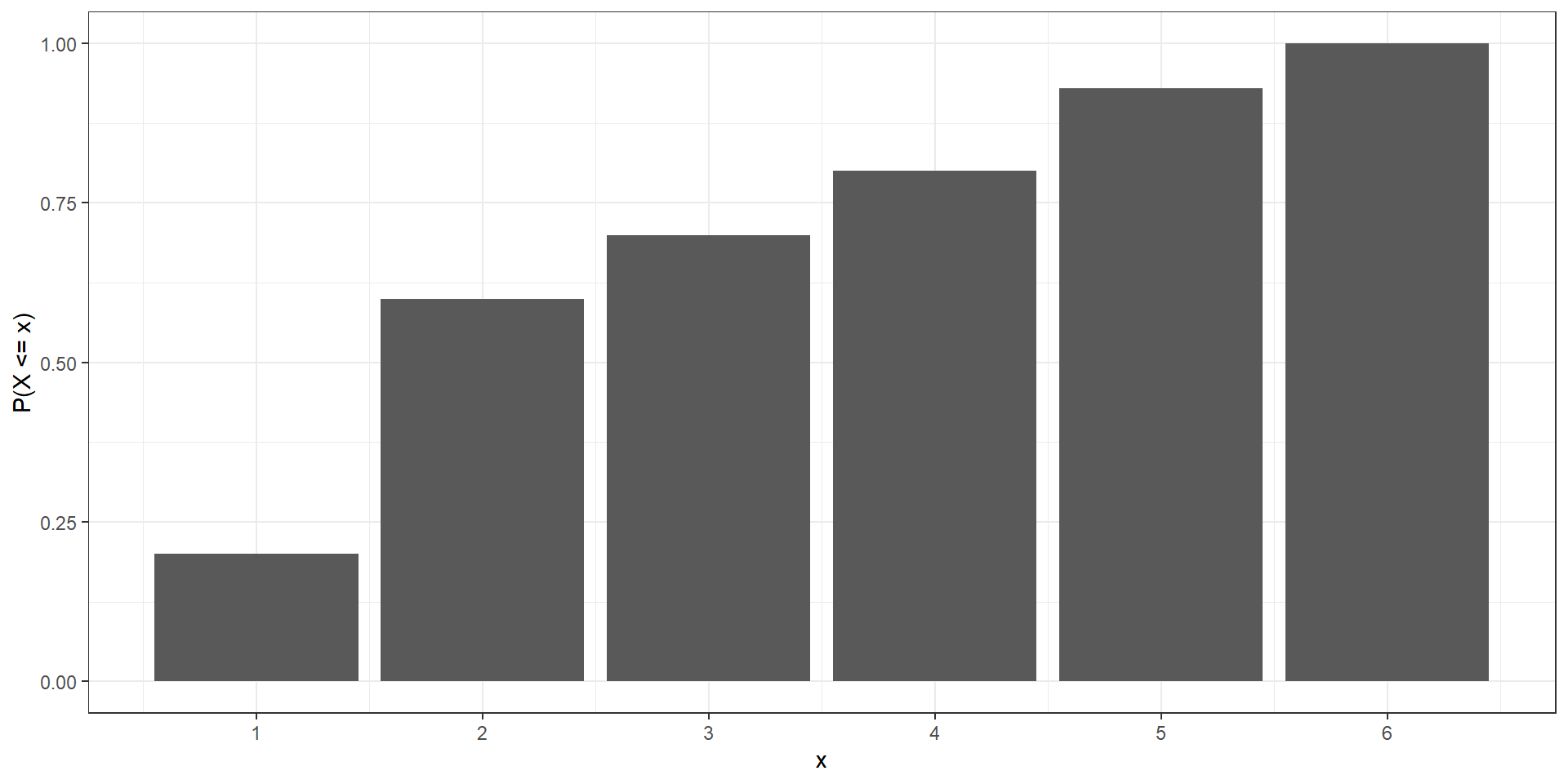

Cumulative distribution function

The cumulative distribution function (CDF) of a random variable \(X\) denotes the probability that \(X\) takes a value less than or equal to \(x\).

If \(X\) is discrete, the cumulative distribution function at a value \(x\) is calculated as the sum of the probabilities of the values that are less than or equal to \(x\):

\[F(x)=P(X\leq x) = \sum_{a\leq x}P(X=a)\]

Example

Calculate the cumulative distribution function for the discrete random variable given by the following probability mass function:

| - | ||||||

|---|---|---|---|---|---|---|

| Outcome | 1 | 2 | 3 | 4 | 5 | 6 |

| Probability | 0.20 | 0.40 | 0.10 | 0.10 | 0.13 | 0.07 |

Example

Calculate the cumulative distribution function for the discrete random variable given by the following probability mass function:

| - | ||||||

|---|---|---|---|---|---|---|

| Outcome | 1 | 2 | 3 | 4 | 5 | 6 |

| Probability | 0.20 | 0.40 | 0.10 | 0.10 | 0.13 | 0.07 |

\(F(1) = P(X \leq 1) =\)

\(F(2) = P(X \leq 2) =\)

\(F(3) = P(X \leq 3) =\)

\(F(4) = P(X \leq 4) =\)

\(F(5) = P(X \leq 5) =\)

\(F(6) = P(X \leq 6) =\)

Example

Calculate the cumulative distribution function for the discrete random variable given by the following probability mass function:

| - | ||||||

|---|---|---|---|---|---|---|

| Outcome | 1 | 2 | 3 | 4 | 5 | 6 |

| Probability | 0.20 | 0.40 | 0.10 | 0.10 | 0.13 | 0.07 |

\(F(1) = P(X \leq 1) = 0.20\)

\(F(2) = P(X \leq 2) = 0.20+0.40 = 0.60\)

\(F(3) = P(X \leq 3) = 0.20+0.40+0.10 = 0.70\)

\(F(4) = P(X \leq 4) = 0.20+0.40+0.10+0.10=0.80\)

\(F(5) = P(X \leq 5) = 0.20+0.40+0.10+0.10+0.13=0.93\)

\(F(6) = P(X \leq 6) = 0.20+0.40+0.10+0.10+0.13+0.07=1\)

Cumulative distribution function

If \(X\) is continuous, the cumulative distribution function at value \(x\) is calculated as the area under the probability density function to the left of \(x\)

\[F(x)=P(X\leq x) = \int_{-\infty}^x f(a)da\]

The probability that a continuous variable takes values between \(a\) and \(b\) is

\(P(a \leq X \leq b) = F(b) - F(a)\)

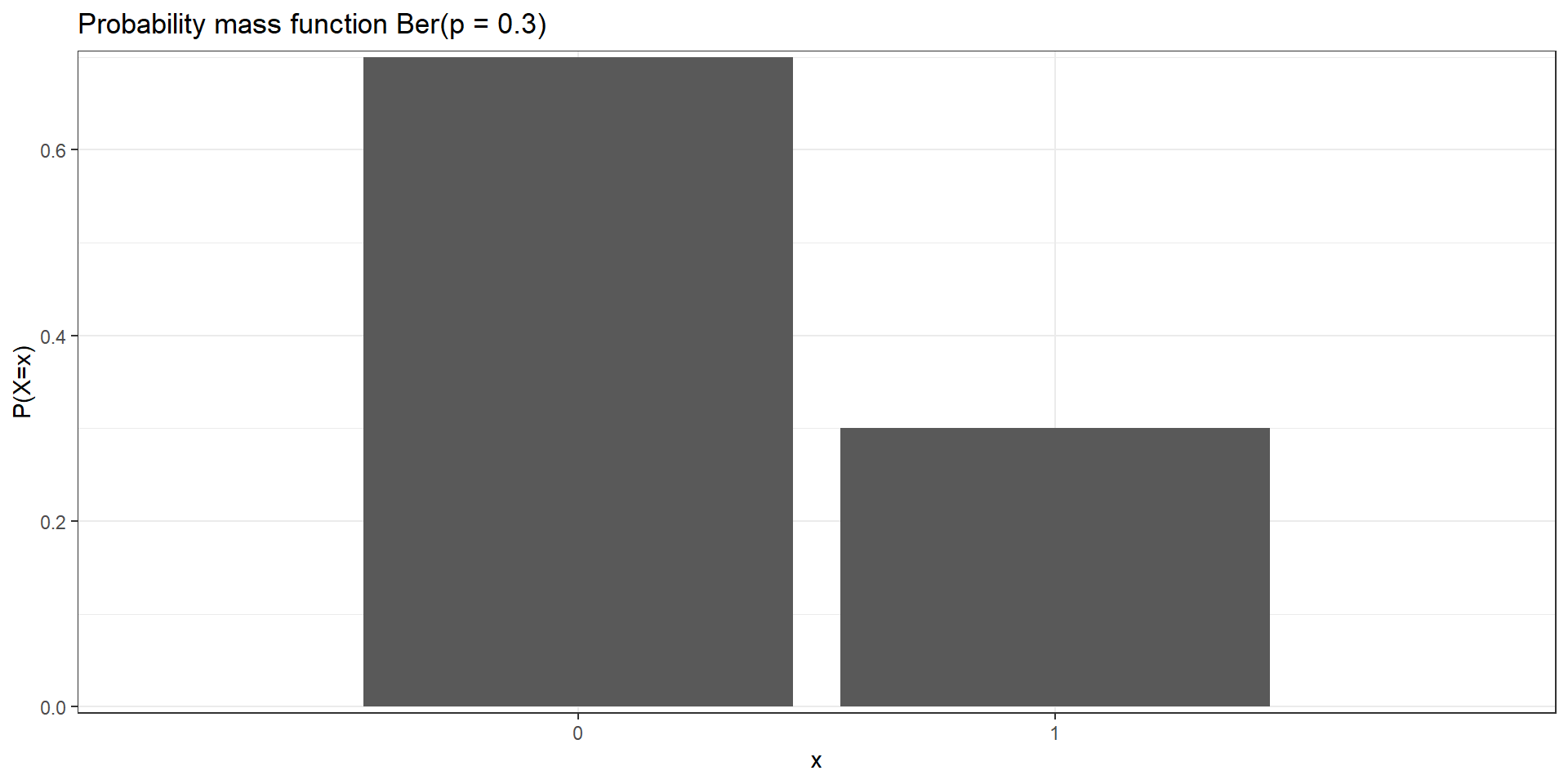

Bernoulli distribution

The Bernoulli distribution is used to describe experiments having exactly two outcomes (e.g., the toss of a coin will be head or tail, a person will test positive for a disease or not, or a political party will win an election or not).

\(X\) a random variable with Bernoulli distribution with probability of success \(p\) \[X \sim Ber(p)\]

- Trial can result in two possible outcomes, success (\(X=1\))/ failure (\(X=0\))

Probability mass function: \(P(X = x) = p^x (1-p)^{1-x},\ x \in \{0, 1\}\)

\(P(X=0) = p^0 (1-p)^{1-0}=1-p\)

\(P(X=1) = p^1 (1-p)^{0}=p\)Mean \(E[X]= \sum_{i} x_i P(X=x_i) = 0 (1-p)+ 1 p=p\)

Variance \(Var[X] = E[(X-E[X])^2] =\) \(= E[X^2]-E[X]^2 = 0^2 (1-p)+ 1^2 p - p^2 = p(1-p)\)

Example

\(X \sim Ber(p)\), \(p = 0.3\)

Probability mass function: \(P(X = x) = p^x (1-p)^{1-x},\ x \in \{0, 1\}\)

\(P(X=0) = p^0 (1-p)^{1-0}=1-p = 1 - 0.3 = 0.7\)

\(P(X=1) = p^1 (1-p)^{0}=p = 0.3\)

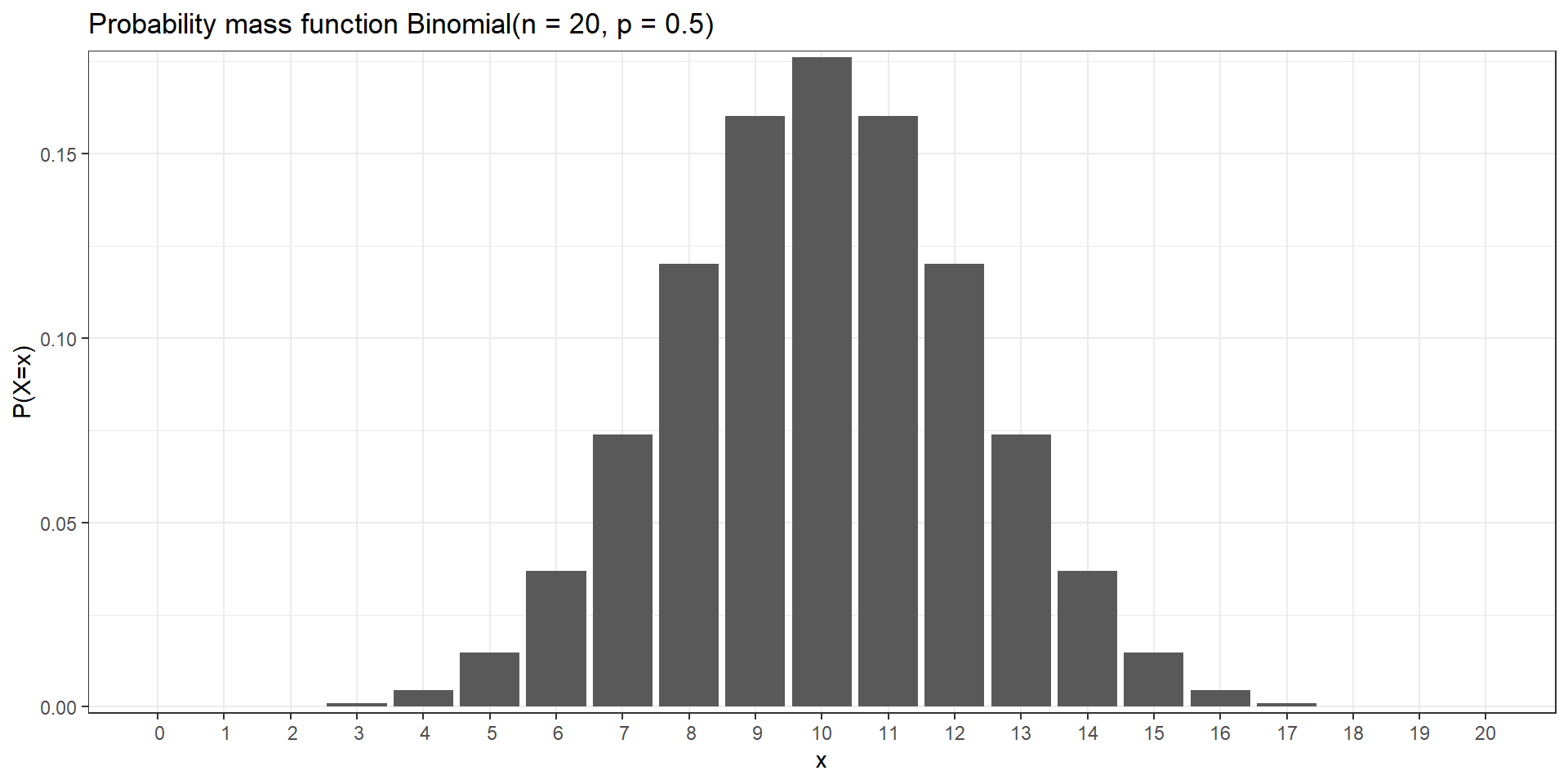

Binomial distribution

The binomial distribution is used to describe the number of successes in a fixed number of independent Bernoulli trials (e.g., number of heads when tossing a coin 20 times, number of defective pieces in a batch of 100 pieces)

\(X\) random variable with Binomial distribution with number of trials \(n\) and probability of success \(p\)

\[X \sim Binomial(n,p)\]

- \(x=0,1,2,\ldots,n\) number of successes. \(n\) number of trials fixed

- Probability of success \(p\) is the same from one trial to another

- Each trial is independent (none of the trials have an effect on the probability of the next trial)

- Probability mass function: \(P(X=x) = \binom{n}{x} p^x (1-p)^{n-x}\)

- Mean is \(n p\). Variance is \(n p (1-p)\)

Example

Probability mass function: \(P(X=x) = \binom{n}{x} p^x (1-p)^{n-x}\)

x y

0 9.536743e-07

1 1.907349e-05

2 1.811981e-04

3 1.087189e-03

4 4.620552e-03 x y

5 0.01478577

6 0.03696442

7 0.07392883

8 0.12013435

9 0.16017914 x y

10 0.17619705

11 0.16017914

12 0.12013435

13 0.07392883

14 0.03696442 x y

15 1.478577e-02

16 4.620552e-03

17 1.087189e-03

18 1.811981e-04

19 1.907349e-05

20 9.536743e-07

R syntax

| Purpose | Function | Example |

|---|---|---|

Generate n random values from a Binomial distribution |

rbinom(n, size, prob) |

rbinom(1000, 12, 0.25) generates 1000 values from a Binomial distribution with number of trials 12 and probability of success 0.25 |

| Probability Mass Function | dbinom(x, size, prob) |

dbinom(2, 12, 0.25) probability of obtaining 2 successes when the number of trials is 12 and the probability of success is 0.25 |

| Cumulative Distribution Function (CDF) | pbinom(q, size, prob) |

pbinom(2, 12, 0.25) probability of observing 2 or fewer successes when the number of trials is 12 and the probability of success is 0.25 |

Quantile Function (inverse of pbinom()) |

qbinom(p, size, prob) |

qbinom(0.98, 12, 0.25) value at which the CDF of the Binomial distribution with 12 trials and probability of success 0.25 is equal to 0.98 |

Example

Let us consider a biased coin that comes up heads with probability 0.7 when tossed. Let \(X\) be the random variable denoting the number of heads obtained when the coin is tossed

- What is the probability of obtaining 4 heads in 10 tosses?

- Calculate \(P(X \leq 4)\)

- Generate 3 random values from \(X \sim Bin(n = 10, p = 0.7)\)

\(X \sim Bin(n = 10, p = 0.7)\)

- What is the probability of obtaining 4 heads in 10 tosses? \(P(X = 4)\)

- Calculate \(P(X \leq 4)\)

\(P(X \leq 4) = P(X = 0)+P(X = 1)+P(X = 2)+P(X = 3)+P(X = 4)\)

dbinom(0, size = 10, prob = 0.7) + dbinom(1, size = 10, prob = 0.7) + dbinom(2, size = 10, prob = 0.7) +

dbinom(3, size = 10, prob = 0.7) + dbinom(4, size = 10, prob = 0.7)[1] 0.04734899Alternatively, using the cumulative distribution function

Normal distribution

The Normal distribution is used to model many procesess in nature and industry (e.g., heights, IQ scores, package delivery time, stock volatility)

\(X\) random variable with a normal distribution with mean \(\mu\) and variance \(\sigma^2\)

\[X \sim N(\mu, \sigma^2)\]

- \(x \in \mathbb{R}\)

- Probability density function: \(\displaystyle{f(x)=\frac{1}{\sigma \sqrt{2\pi}} exp^{-(x-\mu)^2/2 \sigma^2}}\)

Bell-shaped density function. Single peak at the mean (most data values occur around the mean). Symmetrical, centered at the mean

\(\mu \in \mathbb{R}\) represents the mean and the median of the normal distribution. The normal distribution is symmetric about the mean. Most values are around the mean, and half of the values are above the mean and half of the values below the mean. Changing the mean shifts the bell curve to the left or right.

\(\sigma>0\) standard deviation (\(\sigma^2>0\) variance). The standard deviation denotes how spread the data are. Changing the standard deviation stretches or constricts the curve.

Standard Normal distribution

The standard normal distribution is a normal distribution with mean 0 and variance 1.

The standard normal distribution is represented with the letter \(Z\). \[Z \sim N(\mu=0, \sigma^2=1)\]

If \(X \sim N(\mu, \sigma^2)\), then \(\displaystyle{Z = \frac{X-\mu}{\sigma} \sim N(0, 1)}\)

68-95-99.7 rule

The normal distribution is symmetric about the mean \(\mu\). Total area under probability density curve is 1. Probability below the mean is 0.5 and above 0.5.

Approximately 68% of the area lies between one standard deviation below the mean (\(\mu-\sigma\)) and one standard deviation above the mean (\(\mu+\sigma\)). Approximately 68% of data lies within 1 standard deviation from the mean.

Approximately 95% of data lies within 2 standard deviations from the mean.

Approximately 99.7% of data lies within 3 standard deviations from mean.

R syntax

| Purpose | Function | Example |

|---|---|---|

Generate n random values from a Normal distribution |

rnorm(n, mean, sd) |

rnorm(1000, 2, .25) generates 1000 values from a Normal distribution with mean 2 and standard deviation 0.25 |

| Probability Density Function | dnorm(x, mean, sd) |

dnorm(0, 0, 0.5) density at value 0 (height of the probability density function at value 0) of the Normal distribution with mean 0 and standard deviation 0.5. |

| Cumulative Distribution Function (CDF) | pnorm(q, mean, sd) |

pnorm(1.96, 0, 1) area under the density function of the standard normal to the left of 1.96 (= 0.975) |

Quantile Function (inverse of pnorm()) |

qnorm(p, mean, sd) |

qnorm(0.975, 0, 1) value at which the CDF of the standard normal distribution is equal to 0.975 ( = 1.96) |

Example

Consider a random variable \(X \sim N(\mu=100, \sigma=15)\). Calculate:

- \(P(X < 125)\)

- \(P(X \geq 110)\)

- \(P(110 < X < 125)\)

Example

Consider a random variable \(X \sim N(\mu=100, \sigma=15)\). Calculate:

- \(P(X < 125)\)

Percentiles and quantiles

The \(k\)th percentile is the value \(x\) such that \(P(X < x) = k/100\).

The \(k\)th percentile of a set of values divides them so that \(k\)% of the values lie below and (100-\(k\))% of the values lie above.

Quantiles are the same as percentiles, but are indexed by sample fractions rather than by sample percentages (e.g., 10th percentile or 0.10 quantile).

Example

The mean Body mass index (BMI) for men aged 60 is 29 with a standard deviation of 6. BMI is expressed as kg/m^2. \(X \sim N(\mu=29, \sigma = 6)\)

- What is the 90th percentile (or quantile 0.90)?

The 90th percentile is the value \(x\) such that \(P(X < x) = 0.90\).

The 90th percentile is 36.69. This means 90% of the BMIs in men aged 60 are below 36.69. 10% of the BMIs in men aged 60 are above 36.69.

- Find percentile 25th (or quantile 0.25).

Value \(x\) such that \(P(X < x) = 0.25\).

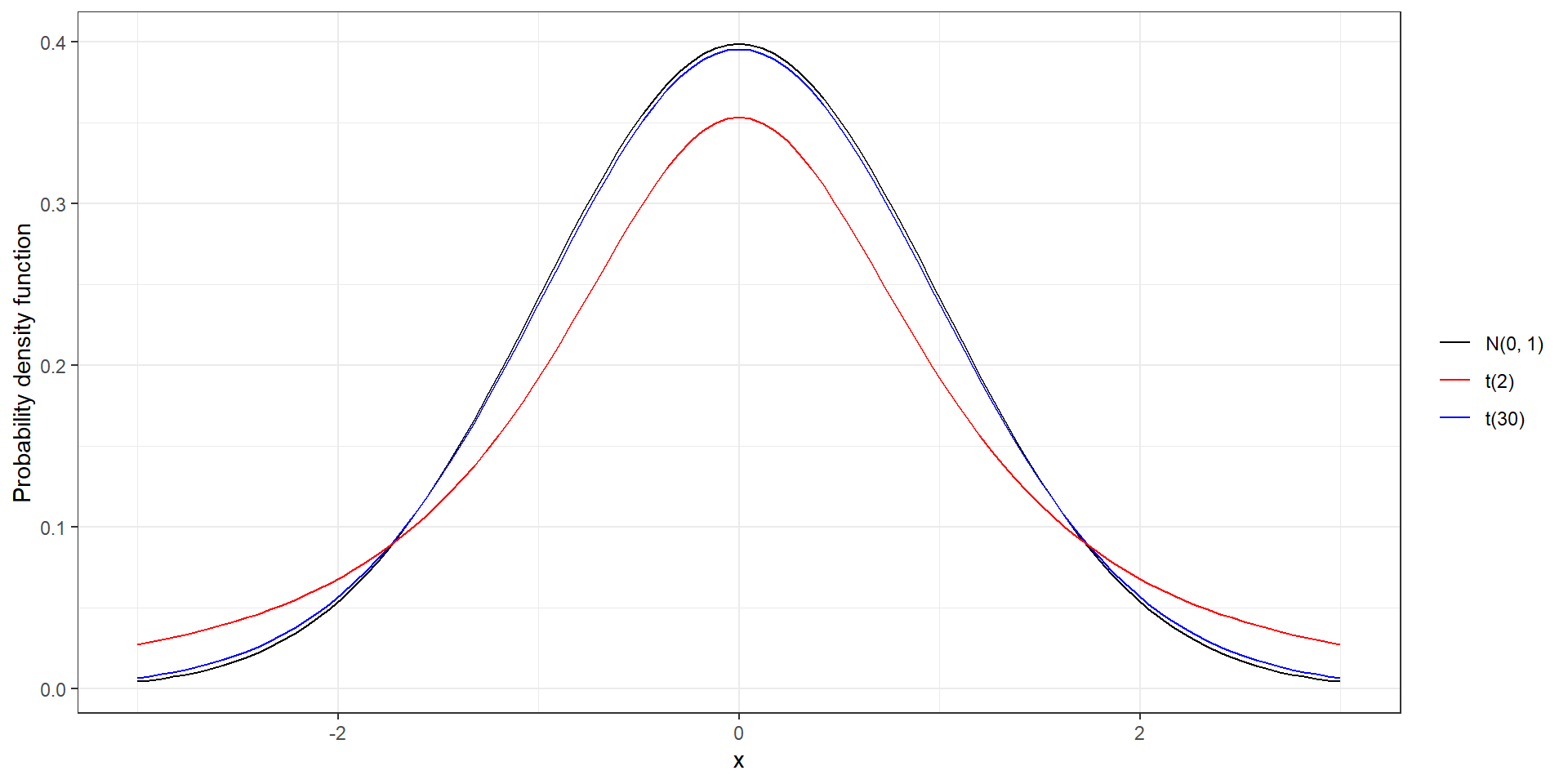

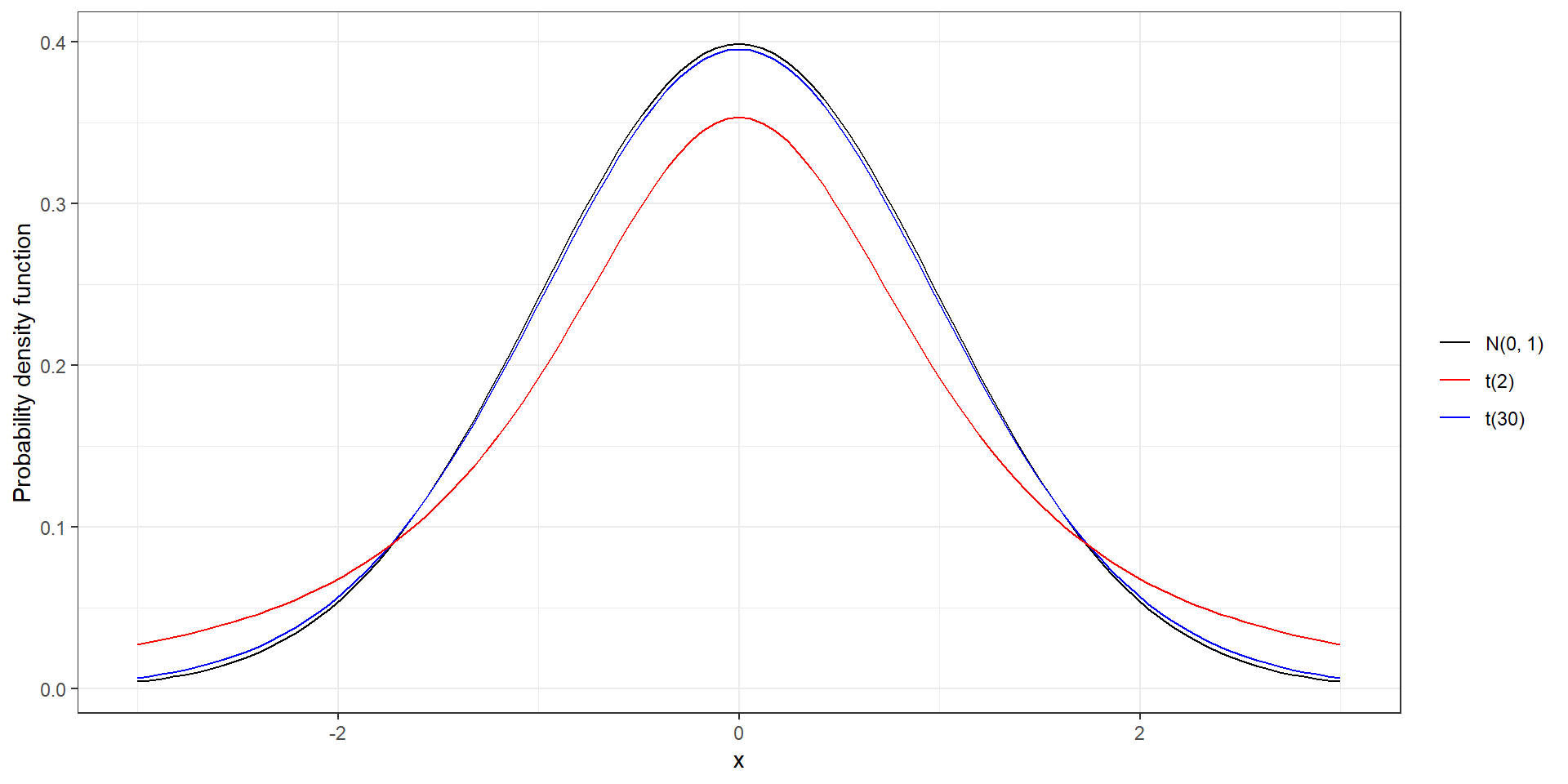

t distribution

- Mean 0. Symmetric and bell-shaped like the normal distribution.

- Heavier tails than normal distribution, observations more likely to fall beyond two standard deviations from the mean than under the normal

The t distribution has a parameter called degrees of freedom (df). The df describes the form of the t-distribution. When the number of degrees of freedom increases, the t-distribution approaches the standard normal, N(0, 1)

R syntax

| Purpose | Function | Example |

|---|---|---|

Generate n random values from a t distribution |

rt(n, df) |

rt(1000, 2) generates 1000 values from a t distribution with 2 degrees of freedom |

| Probability Density Function (PDF) | dt(x, df) |

dt(1, 2) density at value 1 (height of the PDF at value 1) of the t distribution with 2 degrees of freedom |

| Cumulative Distribution Function (CDF) | pt(q, df) |

pt(5, 2) area under the density function of the t(2) to the left of 5 |

Quantile Function (inverse of pt()) |

qt(p, df) |

qt(0.97, 2) value at which the CDF of the t(2) distribution is equal to 0.97 |

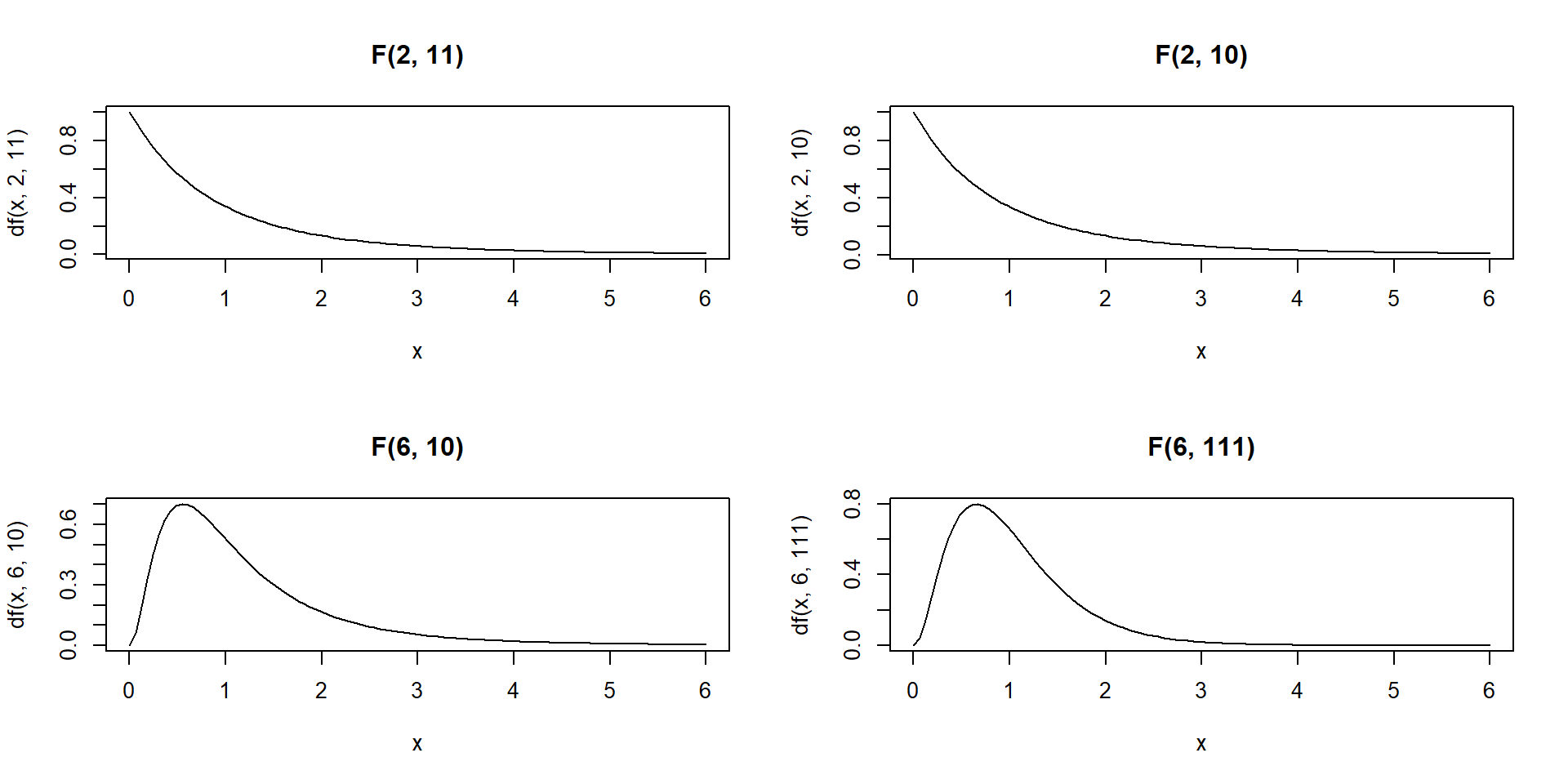

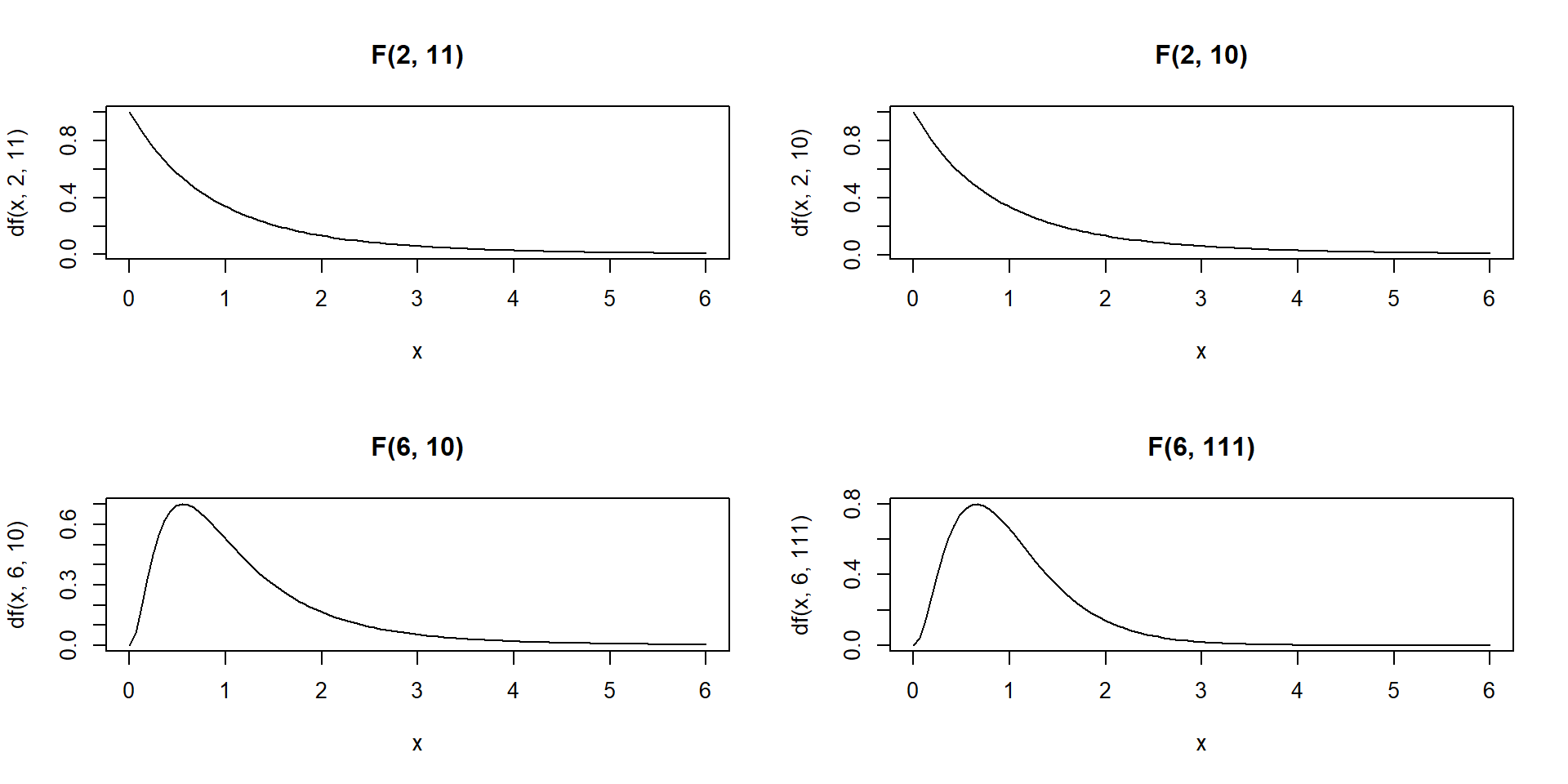

F distribution

The F distribution is usually defined as the ratio of variances of two populations normally distributed. The F distribution depends on two parameters: the degrees of freedom of the numerator and the degrees of freedom of the denominator.

The F-distribution is always \(\geq 0\) since it is the ratio of variances and variances are squares of deviations and hence are non-negative numbers.

Density is skewed to the right

Shape changes depending on the numerator and denominator degrees of freedom

As the degrees of freedom for the numerator and denominator get larger, the density approximates the normal

In ANOVA we always use the right-tailed area to calculate p-values

Density curves of four different F-distributions:

par(mfrow = c(2, 2)) # to draw figures in a 2 by 2 array on the device

x <- seq(0, 6, length.out = 100)

plot(x, df(x, 2, 11), main = "F(2, 11)", type = "l")

plot(x, df(x, 2, 10), main = "F(2, 10)", type = "l")

plot(x, df(x, 6, 10), main = "F(6, 10)", type = "l")

plot(x, df(x, 6, 111), main = "F(6, 111)", type = "l")

R syntax

| Purpose | Function | Example |

|---|---|---|

Generate n random values from a F distribution |

rf(n, df1, df2) |

rf(1000, 2, 11) generates 1000 values from a F distribution with degrees of freedom 2 and 11 |

| Probability Density Function (PDF) | df(x, df1, df2) |

df(1, 2, 11) density at value 1 (height of the PDF at value 1) of the F distribution with degrees of freedom 2 and 11 |

| Cumulative Distribution Function (CDF) | pf(q, df1, df2) |

pf(5, 2, 11) area under the density function of the F(2,11) to the left of 5 |

Quantile Function (inverse of pf()) |

qf(p, df1, df2) |

qf(0.97, 2, 11) value at which the CDF of the F(2,11) distribution is equal to 0.97 |

Find the 95th percentile (or quantile 0.95) of the F distribution with 2 and 11 degrees of freedom.

Descriptive analysis

Descriptive statistics for continuous variables

Daily air quality measurements in New York, May to September 1973

?airquality

Ozone Solar.R Wind Temp Month Day

1 41 190 7.4 67 5 1

2 36 118 8.0 72 5 2

3 12 149 12.6 74 5 3

4 18 313 11.5 62 5 4

5 NA NA 14.3 56 5 5

6 28 NA 14.9 66 5 6Daily temperature values

[1] 67 72 74 62 56 66 65 59 61 69 74 69 66 68 58 64 66 57 68 62 59 73 61 61 57

[26] 58 57 67 81 79 76 78 74 67 84 85 79 82 87 90 87 93 92 82 80 79 77 72 65 73

[51] 76 77 76 76 76 75 78 73 80 77 83 84 85 81 84 83 83 88 92 92 89 82 73 81 91

[76] 80 81 82 84 87 85 74 81 82 86 85 82 86 88 86 83 81 81 81 82 86 85 87 89 90

[101] 90 92 86 86 82 80 79 77 79 76 78 78 77 72 75 79 81 86 88 97 94 96 94 91 92

[126] 93 93 87 84 80 78 75 73 81 76 77 71 71 78 67 76 68 82 64 71 81 69 63 70 77

[151] 75 76 68Measures of central tendency

There are three common measures of central tendency, all of which try to answer the basic question of which value is the most “typical.” These are the mean (average of all observations), median (middle observation), and mode (appears most often).

Sample Mean \[\bar x = \frac{\sum_{i=1}^n x_i}{n}\]

Variability

The central tendencies give a sense of the most typical values but do not provide with information on the variability of the values. Variability measures provide understanding of how the values are spread out.

Range corresponds to maximum value minus the minimum value.

Percentiles and quartiles answer questions like this: What is the temperature value such that a given percentage of temperatures is below it?

Specifically, for any percentage p, the pth percentile is the value such that a percentage p of all values are less than it. Similarly, the first, second, and third quartiles are the percentiles corresponding to p=25%, p=50%, and p=75%. These three values divide the data into four groups, each with (approximately) a quarter of all observations. Note that the second quartile is the median.

Quantiles are the same as percentiles, but are indexed by sample fractions rather than by sample percentages.

# default quantile() percentiles are 0% (minimum), 25%, 50%, 75%, and 100% (maximum)

# provides same output as fivenum()

quantile(d$Temp, na.rm = TRUE) 0% 25% 50% 75% 100%

56 72 79 85 97 # we can customize quantile() for specific percentiles

quantile(d$Temp, probs = seq(from = 0, to = 1, by = .1), na.rm = TRUE) 0% 10% 20% 30% 40% 50% 60% 70% 80% 90% 100%

56.0 64.2 69.0 74.0 76.8 79.0 81.0 83.0 86.0 90.0 97.0 Interquartile range (IQR) corresponds to the difference between the first and third quartiles.

An alternative approach is to use the summary() function used to produce min, 1st quantile, median, mean, 3rd quantile, and max summary measures.

Variance. Although the range provides a measure of variability and percentiles/quartiles provide an understanding of divisions of the data, the most common measures to summarize variability are the variance and the standard deviation. They measure how spread out values are around their average.

\[s^2 = \frac{1}{n-1} \sum_{i=1}^{n} (x_i - \bar{x})^2\]

\[s = \sqrt{\frac{1}{n-1} \sum_{i=1}^{n} (x_i - \bar{x})^2}\]

Summaries data frame

We can also get summary statistics for multiple columns at once, using the apply() function.

Ozone Solar.R Wind Temp Month Day

42.129310 185.931507 9.957516 77.882353 6.993464 15.803922 Summaries on the whole data frame.

Ozone Solar.R Wind Temp

Min. : 1.00 Min. : 7.0 Min. : 1.700 Min. :56.00

1st Qu.: 18.00 1st Qu.:115.8 1st Qu.: 7.400 1st Qu.:72.00

Median : 31.50 Median :205.0 Median : 9.700 Median :79.00

Mean : 42.13 Mean :185.9 Mean : 9.958 Mean :77.88

3rd Qu.: 63.25 3rd Qu.:258.8 3rd Qu.:11.500 3rd Qu.:85.00

Max. :168.00 Max. :334.0 Max. :20.700 Max. :97.00

NA's :37 NA's :7

Month Day

Min. :5.000 Min. : 1.0

1st Qu.:6.000 1st Qu.: 8.0

Median :7.000 Median :16.0

Mean :6.993 Mean :15.8

3rd Qu.:8.000 3rd Qu.:23.0

Max. :9.000 Max. :31.0

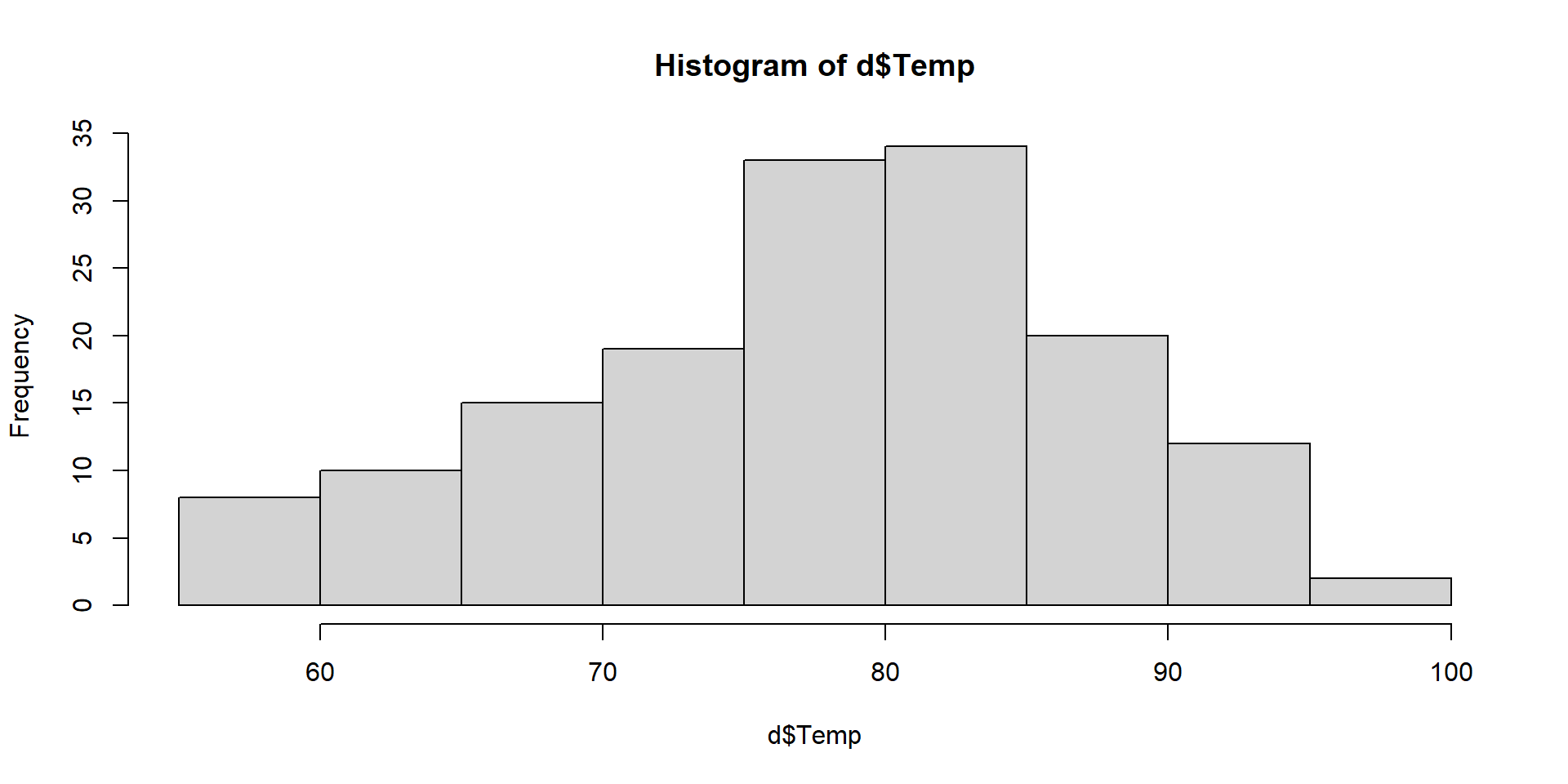

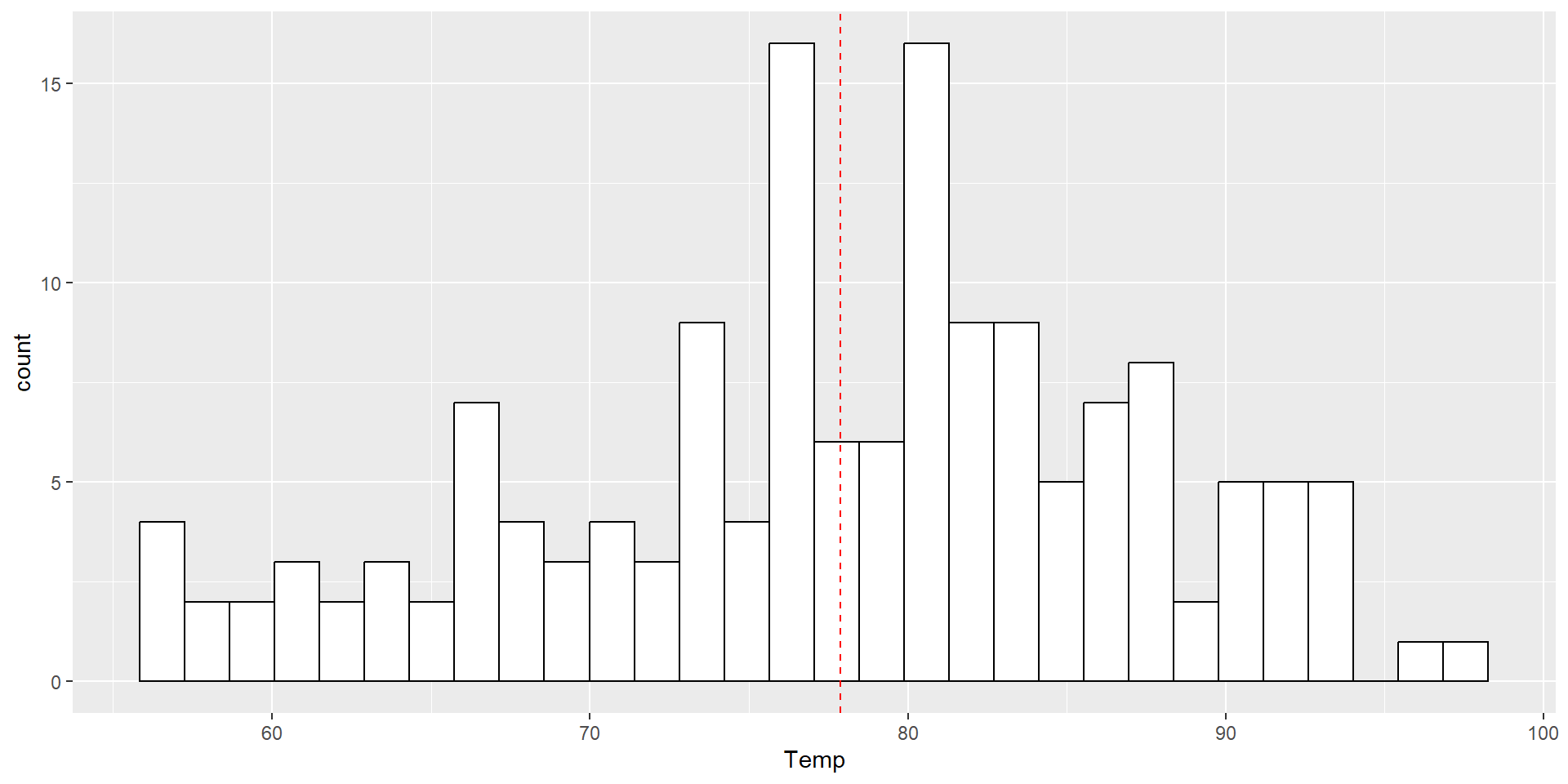

Visualization

Histograms display a 1D distribution by dividing into bins and counting the number of observations in each bin. Whereas the previously discussed summary measures - mean, median, standard deviation, skewness - describes only one aspect of a numerical variable, a histogram provides the complete picture by illustrating the center of the distribution, the variability, skewness, and other aspects in one convenient chart.

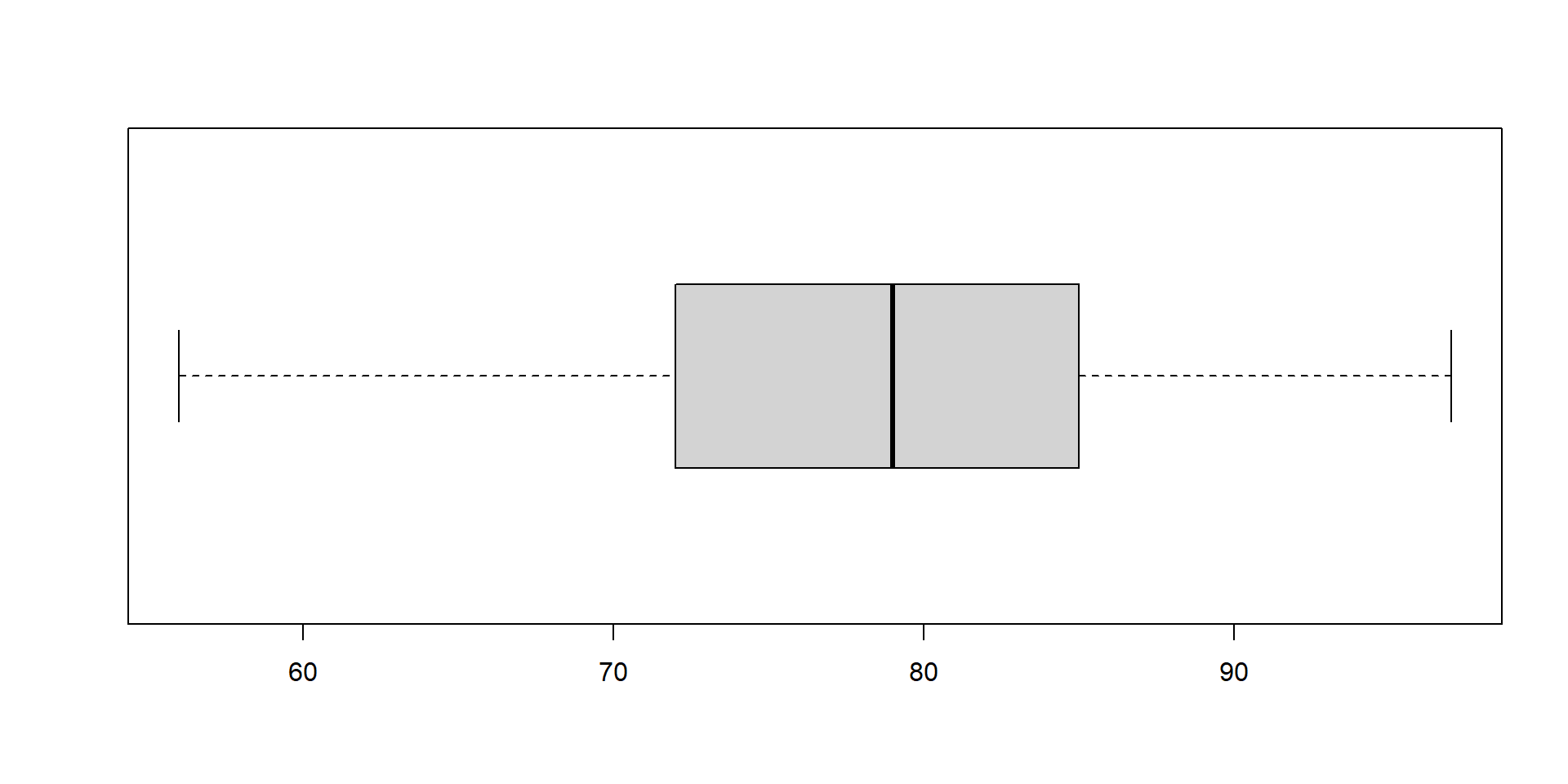

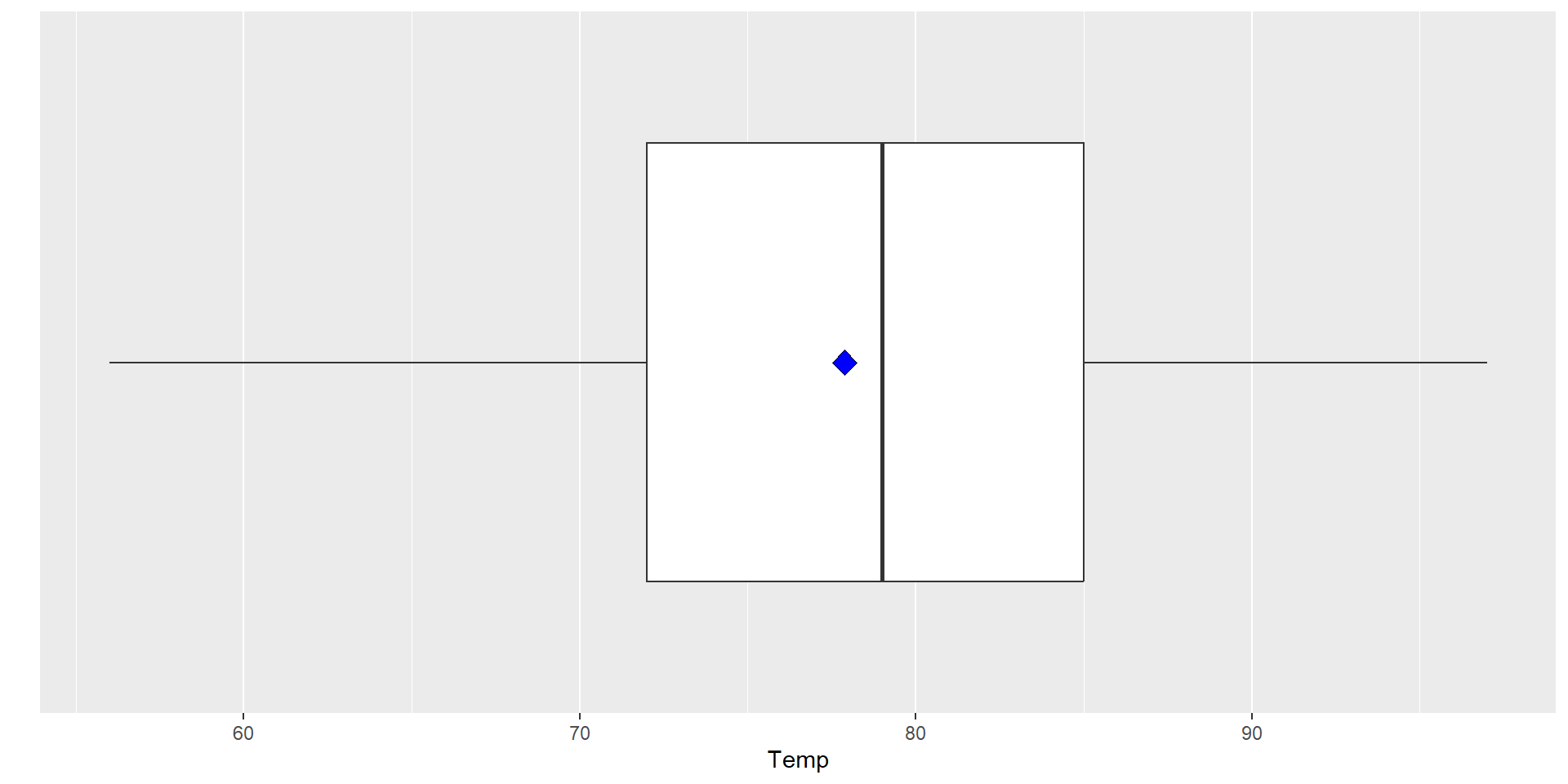

Boxplots

Boxplots are an alternative way to illustrate the distribution of a variable and is a concise way to illustrate the standard quantiles, shape, and outliers of data. The box itself extends from the first quartile to the third quartile. This means that it contains the middle half of the data. The line inside the box is positioned at the median. The lines (whiskers) coming out either side of the box extend to 1.5 interquartile ranges (IQRs) from the quartiles. These generally include most of the data outside the box. More distant values, called outliers, are denoted separately by individual points.

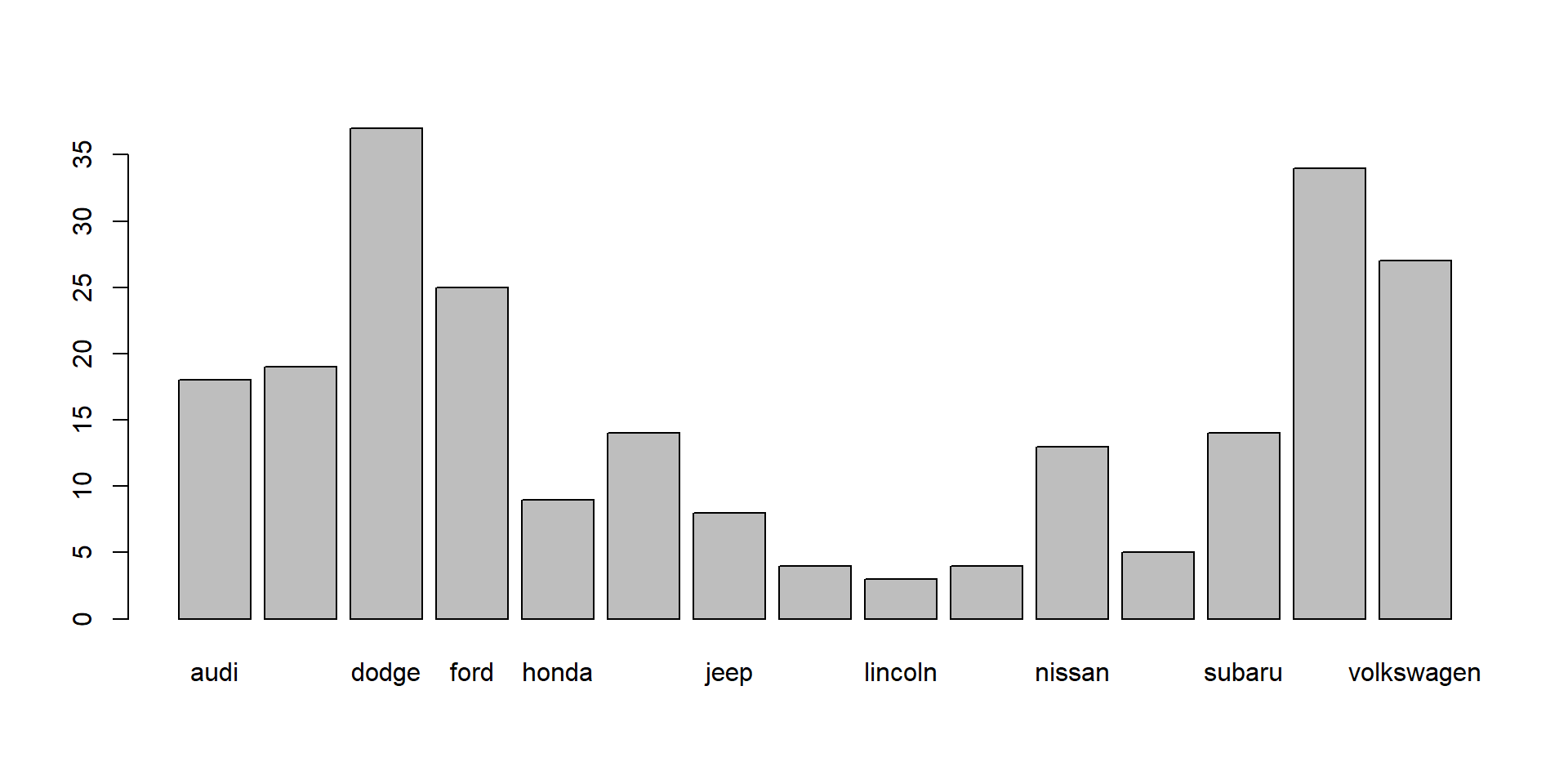

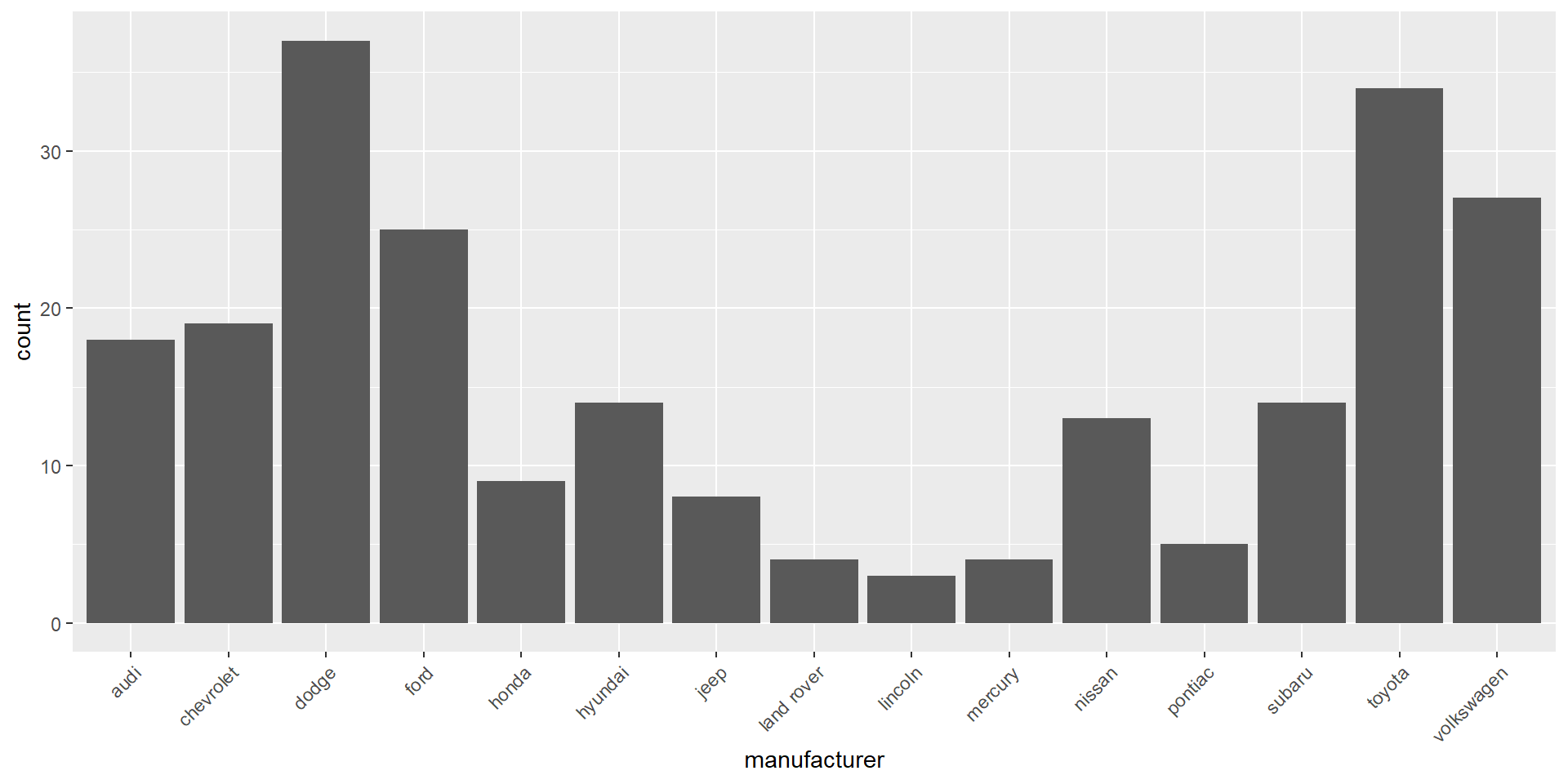

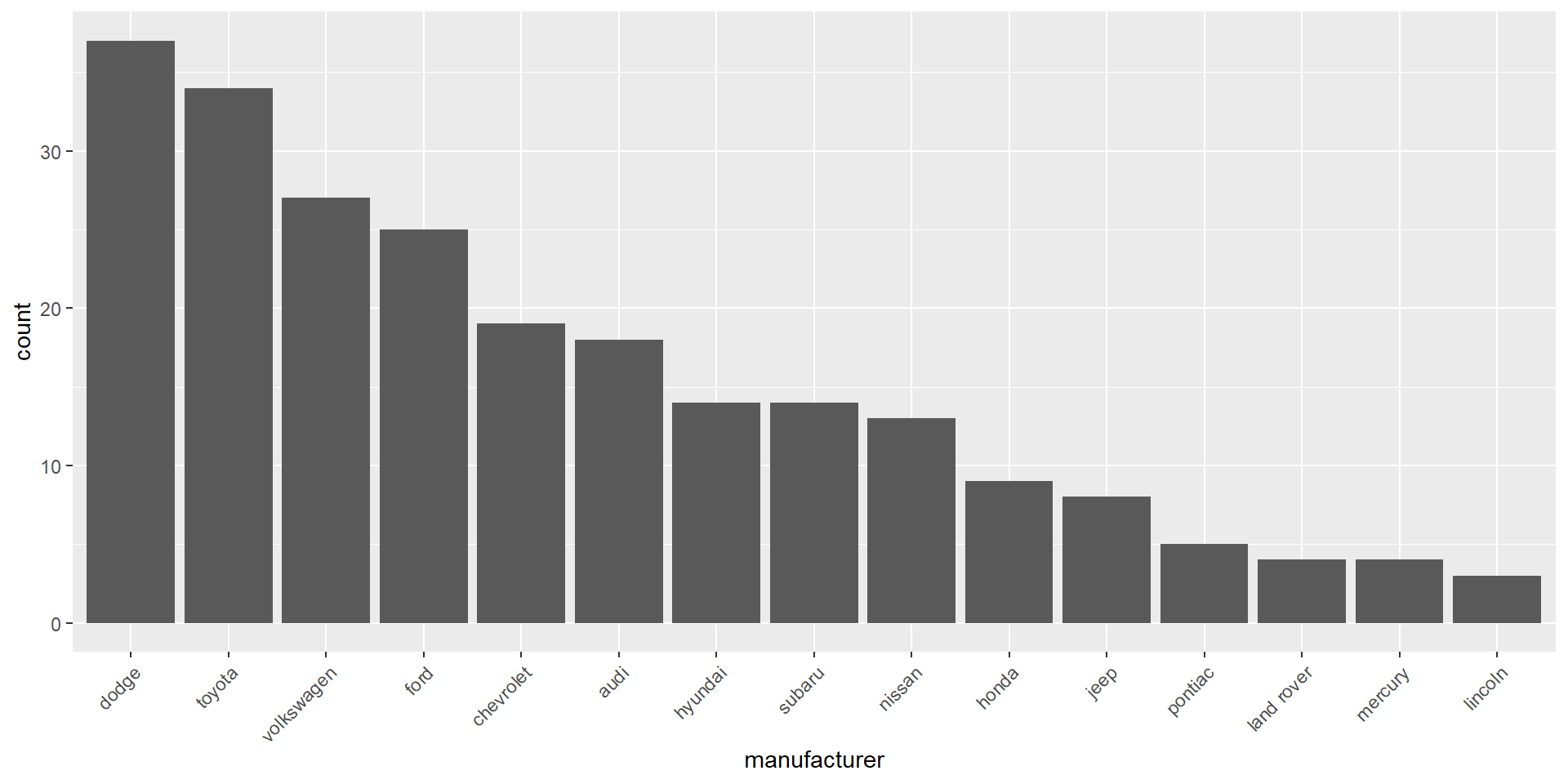

Descriptive statistics for discrete variables

Fuel economy data from 1999 to 2008 for 38 popular models of cars

?ggplot2::mpg

# A tibble: 6 × 11

manufacturer model displ year cyl trans drv cty hwy fl class

<chr> <chr> <dbl> <int> <int> <chr> <chr> <int> <int> <chr> <chr>

1 audi a4 1.8 1999 4 auto(l5) f 18 29 p compa…

2 audi a4 1.8 1999 4 manual(m5) f 21 29 p compa…

3 audi a4 2 2008 4 manual(m6) f 20 31 p compa…

4 audi a4 2 2008 4 auto(av) f 21 30 p compa…

5 audi a4 2.8 1999 6 auto(l5) f 16 26 p compa…

6 audi a4 2.8 1999 6 manual(m5) f 18 26 p compa…Frequencies and proportions for categorical variables

audi chevrolet dodge ford honda hyundai jeep

18 19 37 25 9 14 8

land rover lincoln mercury nissan pontiac subaru toyota

4 3 4 13 5 14 34

volkswagen

27

audi chevrolet dodge ford honda hyundai jeep

0.07692308 0.08119658 0.15811966 0.10683761 0.03846154 0.05982906 0.03418803

land rover lincoln mercury nissan pontiac subaru toyota

0.01709402 0.01282051 0.01709402 0.05555556 0.02136752 0.05982906 0.14529915

volkswagen

0.11538462 Visualization

Bar charts are most often used to visualize categorical variables. Here we can assess the count of manufacturers:

To make the bar chart easier to examine we can reorder the bars in descending order.

Pairs of continuous variables

Motor trend car road tests. mtcars

?mtcars

mpg cyl disp hp drat wt qsec vs am gear carb

Mazda RX4 21.0 6 160 110 3.90 2.620 16.46 0 1 4 4

Mazda RX4 Wag 21.0 6 160 110 3.90 2.875 17.02 0 1 4 4

Datsun 710 22.8 4 108 93 3.85 2.320 18.61 1 1 4 1

Hornet 4 Drive 21.4 6 258 110 3.08 3.215 19.44 1 0 3 1

Hornet Sportabout 18.7 8 360 175 3.15 3.440 17.02 0 0 3 2

Valiant 18.1 6 225 105 2.76 3.460 20.22 1 0 3 1Data frame with 32 observations on 11 variables.

- mpg Miles/(US) gallon

- cyl Number of cylinders

- disp Displacement (cu.in.)

- hp Gross horsepower

- drat Rear axle ratio

- wt Weight (1000 lbs)

- qsec 1/4 mile time

- vs Engine (0 = V-shaped, 1 = straight)

- am Transmission (0 = automatic, 1 = manual)

- gear Number of forward gears

- carb Number of carburetors

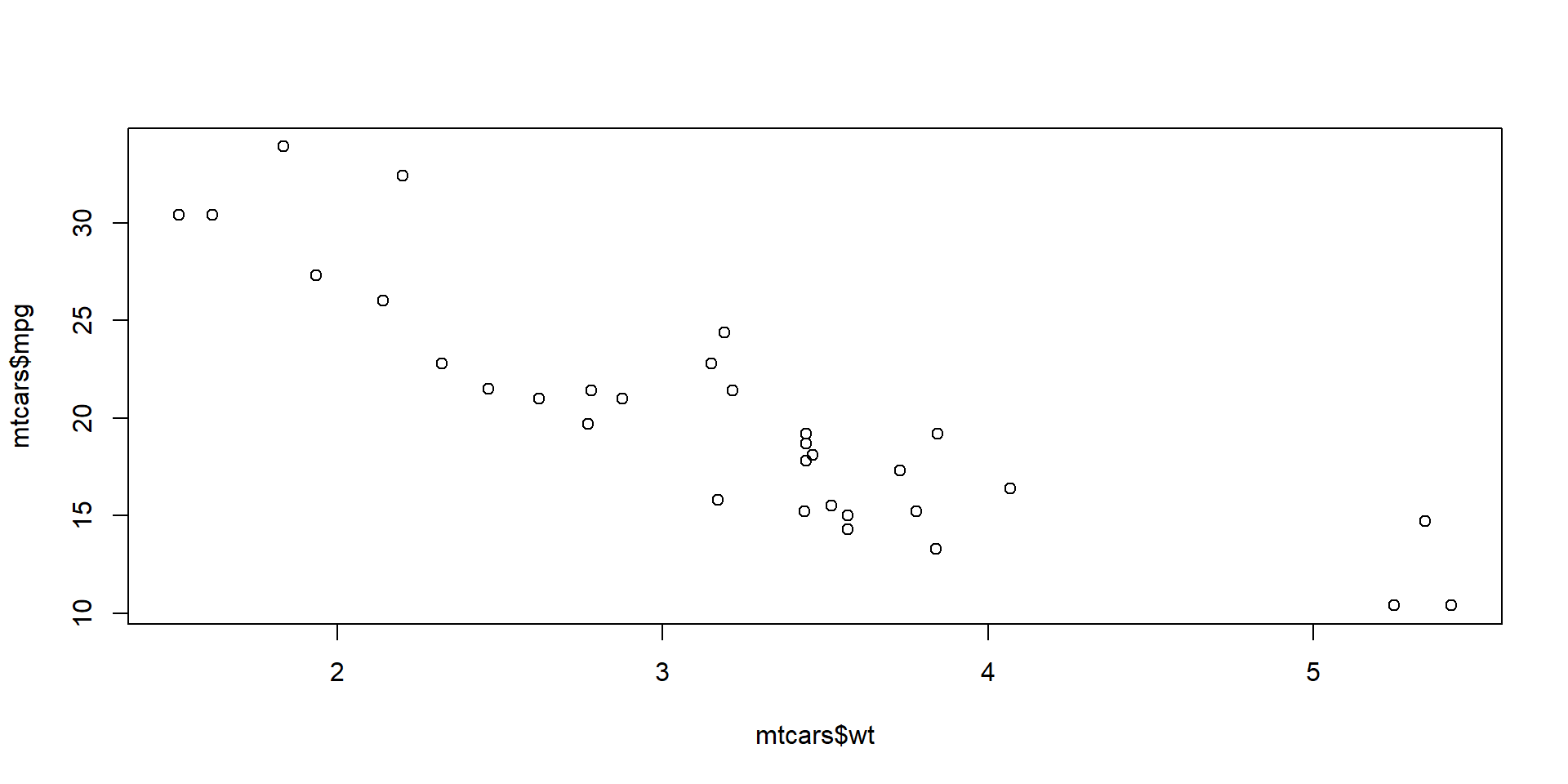

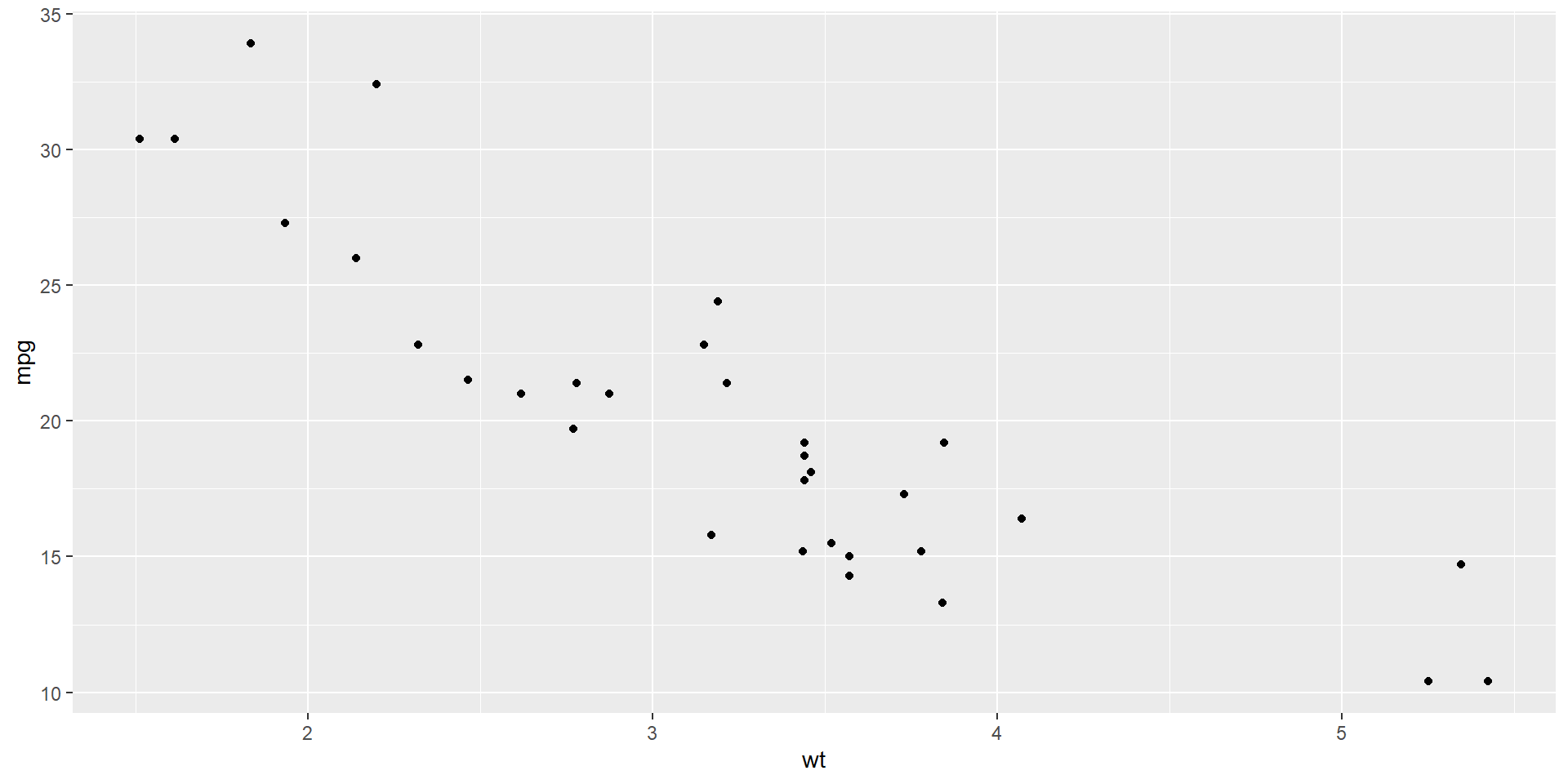

Scatterplot

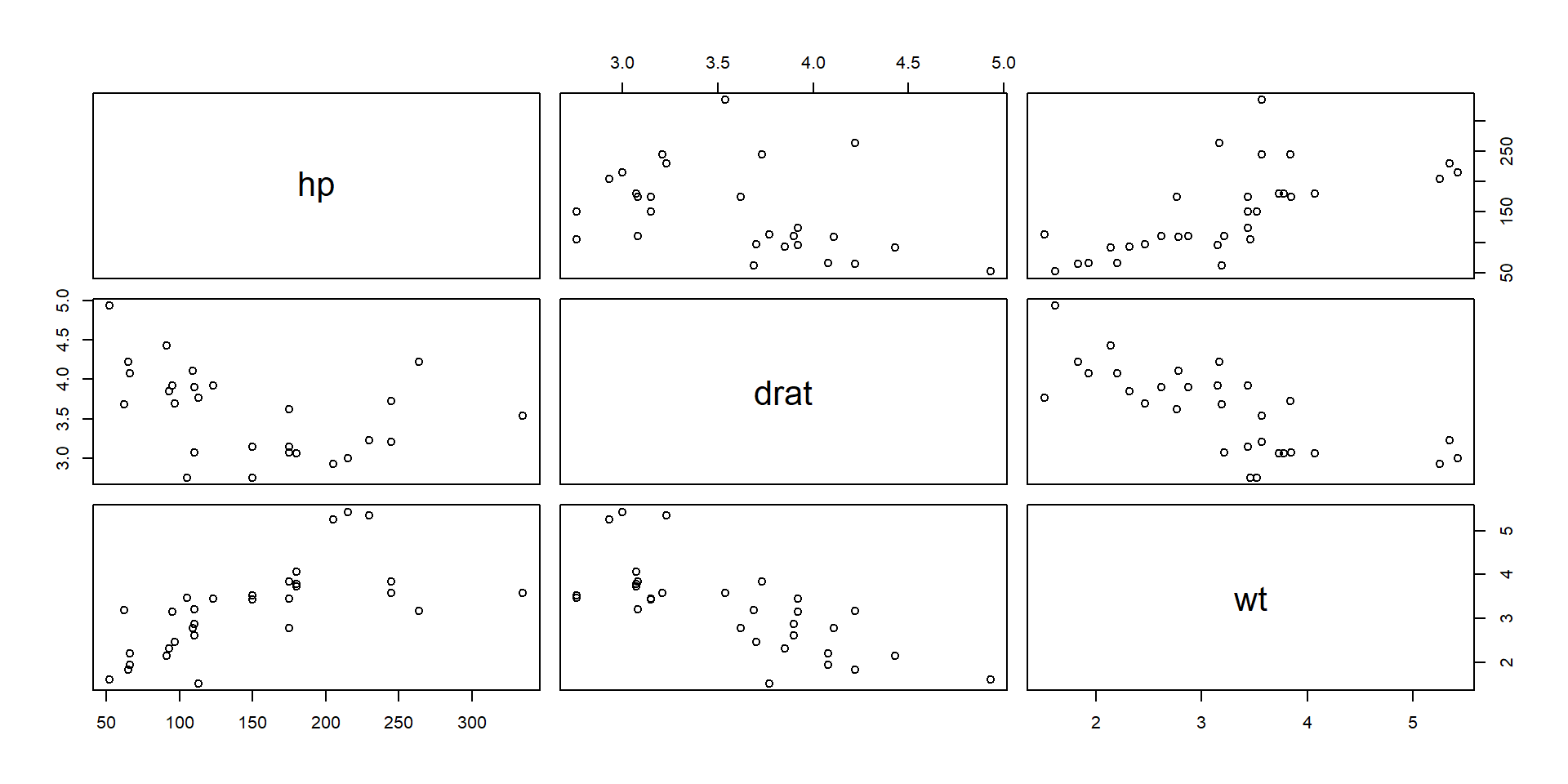

We can also get a scatter plot matrix to observe several plots at once.

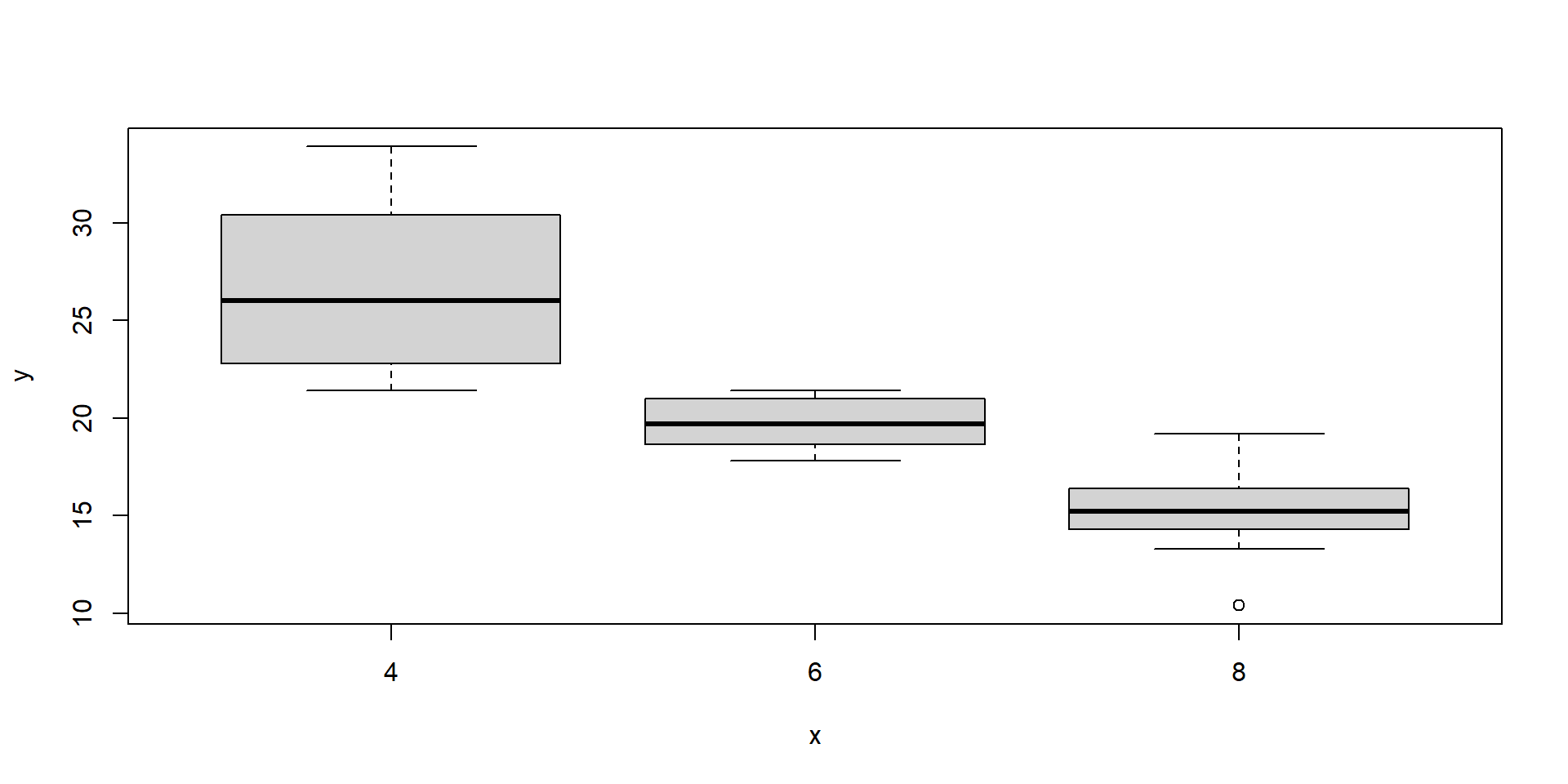

Continuous and categorical variables

Statistical inference

In statistical inference we are interested in learning some quantity representing some feature or parameter of a population. Population is large and we cannot examine all the values in the population directly. Therefore, to learn about the population parameter we take samples from the population and use the information in the samples to draw conclusions about the population.

Population parameters are fixed values. We rarely know the parameter values because it is often difficult to obtain measures from the entire population.

Sample statistics are quantities computed from the values in the sample. They are random variables because they vary from sample to sample.

We use sample statistics to draw conclusions about unknown population parameters.

Example

Suppose we are interested in knowing the mean height of the population living in Saudi Arabia. Population is large and we cannot measure the height of everybody directly. We can get an estimate of the mean height by taking sample of say, 5000 people, and measuring their heights. We can provide point estimates and interval estimates for population parameters.

Population mean is denoted by \(\mu\) and it is unknown

Sample size is \(n = 5000\)

Heights of people in the sample: \((x_1, x_2, \ldots, x_{5000})\)

Point estimate = estimate that specifies a single value of the population.

Sample statistic is the average heights of people in the sample: \(\bar x = \sum x_i/n\)

Interval estimate = estimate that specifies a range of plausible values for the population parameter. We are 95% confident the population mean \(\mu\) is within \((a, b)\)

Sampling distributions of sample statistics

A sample statistic is a random variable because it varies from sample to sample. The distribution of a sample statistic is called sampling distribution.

The sampling distribution of a sample statistic has mean approximately equal to the population mean and standard deviation called standard error (SE).

The Central Limit Theorem (CLT)

CLT states that for any given population distribution with mean \(\mu\) and standard deviation \(\sigma\), if we randomly take sufficiently large (\(n \geq 30\)) random samples from the population with replacement, the sampling distribution of the sample mean \(\bar X\) is normally distributed with mean \(\mu\) and standard error SE = \(\sigma/\sqrt{n}\).

\[\bar X \sim N\left(\mu, \frac{\sigma}{\sqrt{n}}\right)\]

Example

The mean height of a population is 160cm with standard deviation equal to 10. If we sample 50 people, what is the probability that the average height of the sample is greater than 170cm?

Solution

\(\mu_{\bar X} = \mu = 160\)

\(\sigma_{\bar X} = \sigma/\sqrt{n} = 10/\sqrt{50}\)

\(\bar X \sim N(\mu = 160, \sigma = 10/\sqrt{50})\)

The probability that the average height of the sample is greater than 170cm is \(P(\bar X > 170)\).

Simulation Central Limit Theorem

CLT states that for any given population distribution with mean \(\mu\) and standard deviation \(\sigma\), if we randomly take sufficiently large (\(n \geq 30\)) random samples from the population with replacement, the sampling distribution of the sample mean \(\bar X\) is normally distributed with mean \(\mu\) and standard error SE = \(\sigma/\sqrt{n}\).

\[\bar X \sim N\left(\mu, \frac{\sigma}{\sqrt{n}}\right)\]

Illustration of the Central Limit Theorem (CLT) by means of a simulation:

Confidence intervals

Confidence interval

A \((c \times 100)\)% confidence interval for a population parameter based on sample observations is an interval \((A, B)\) such that we are \((c \times 100)\)% confident that the population parameter is within the interval.

When we use a different sample, we obtain a different confidence interval

If we take many samples from the population and calculate 95% CIs for each sample, then 95% of these CIs will contain the true population parameter.

Confidence level \(c = 1 - \alpha\). Significance level \(\alpha = 1- c\)

95% CI. Confidence level \(c = 0.95\). Significance level \(\alpha = 0.05\)

90% CI. Confidence level \(c = 0.90\). Significance level \(\alpha = 0.10\)

99% CI. Confidence level \(c = 0.99\). Significance level \(\alpha = 0.01\)

Construct a confidence interval

A \((c \times 100)\)% confidence interval for a population parameter is \[\mbox{sample statistic $\pm$ critical value $\times$ SE}\]

Sample statistic used to estimate the population parameter.

Sample mean (\(\bar X\)) to estimate the population mean (\(\mu\))

Sample proportion (\(\hat P\)) to estimate the population proportion (\(p\))Standard error SE is the standard deviation of the sampling distribution of the sample statistic

Critical value (\(z^*\), \(t^*(n-1)\)).

Measures the number of SE to be added and subtracted from the sample statistic to achieve the desired confidence level

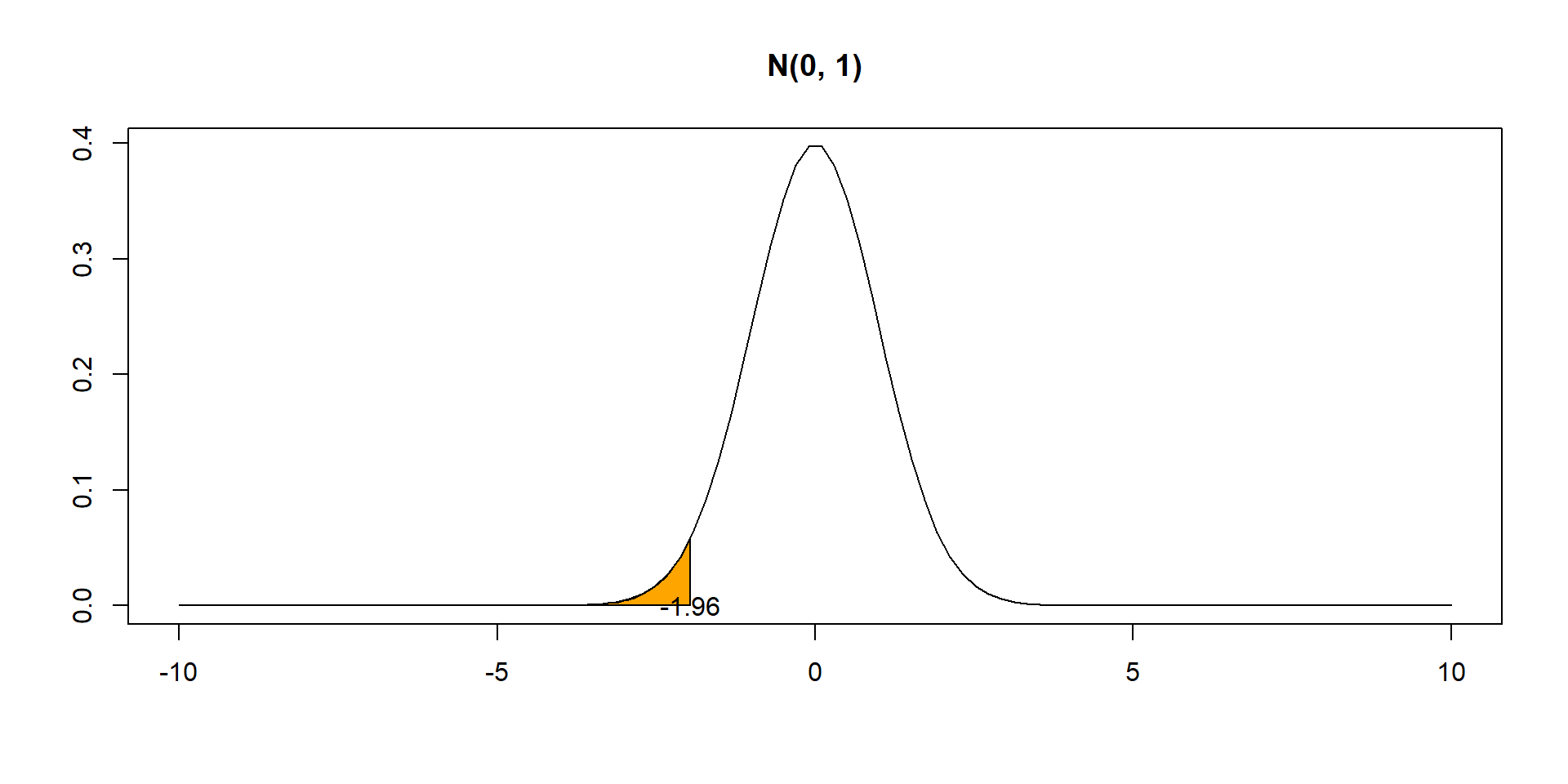

Quantiles

\[X \sim N(\mu = 0, \sigma = 1)\]

Confidence interval for a proportion

Confidence interval for a proportion

\((1-\alpha) \times 100\)% confidence interval for the population proportion \(p\) \[\hat P \pm z^* \times SE = \hat P \pm z^* \times \sqrt{\hat P(1-\hat P)/n}\]

Assumptions:

- Sample observations are independent (random sample)

- In the sample, there are at least 10 successes and 10 failures

\(z^*\): value such that \(c=1-\alpha\) of the probability in the N(0,1) falls between \(-z^*\) and \(z^*\).

Value such that \(\alpha/2\) of the probability in the \(N(0,1)\) is below \(z^*\)

Example. Confidence interval for a proportion

In a random sample of 40 students, 24 said they bought a copy of the textbook. Estimate the proportion of all students who bought the book and give a 95% confidence interval.

Solution \[\hat p \pm z^* \times SE = \hat p \pm z^* \times \sqrt{\hat p(1-\hat p)/n}\] Assumptions:

- Sample observations independent (random sample)

- At least 10 successes (\(24 \geq 10\)) and 10 failures (\(40-24=16 \geq 10\))

Sample statistic: \(\hat p = 24/40 = 0.6\)

Critical value: \(z^* = -1.96\)

(\(\alpha/2\) of the probability in \(N(0,1)\) is below \(z^*\))

\(\hat p \pm z^* \times SE = \hat p \pm z^* \times \sqrt{\hat p(1-\hat p)/n} = 0.6 \pm 1.96 \times \sqrt{ 0.6(1-0.6)/40} =\) \(= (0.448, 0.752)\)

An estimate of the proportion of students who bought the book is 60%.

We are 95% confident that the proportion of students is between 44.8% and 75.2%.

Confidence interval for a proportion

\((1-\alpha) \times 100\)% confidence interval for the population proportion \(p\) \[\hat P \pm z^* \times SE = \hat P \pm z^* \times \sqrt{\hat P(1-\hat P)/n}\]

Assumptions:

- Sample observations are independent (random sample)

- In the sample, there are at least 10 successes and 10 failures

\(z^*\): value such that \(c=1-\alpha\) of the probability in the N(0,1) falls between \(-z^*\) and \(z^*\).

Value such that \(\alpha/2\) of the probability in the \(N(0,1)\) is below \(z^*\)

\(\hat P\) is the proportion of successes in \(n\) trials. So \(\hat P\) is equal to \(Y/n\) where \(Y \sim Binomial(n, p)\).

\(Y\) can be expressed as the sum of \(n\) independent, identically distributed Bernoulli random variables, \[Y = \sum_{i=1}^n X_i\ \mbox{with } X_i \sim Ber(p),\ E[X_i]=p,\ Var[X_i]=p(1-p).\]

The Central Limit Theorem says that when we collect a sufficiently large sample of \(n\) independent observations, \(\bar X \sim N(\mu, \frac{\sigma}{\sqrt{n}})\). Thus, the sampling distribution of the sample proportion \(\hat P\) \[\hat P = \frac{\sum_{i=1}^n X_i}{n} \sim N\left(p, \mbox{SE = } \sqrt{\frac{p(1-p)}{n}}\right). \mbox{ We standardize } \hat P:\ \ \ Z = \frac{\hat P - p}{\sqrt{p(1-p)/n}} \sim N(0, 1)\]

\[P(-1.96 \leq \frac{\hat P - p}{\sqrt{\hat P(1-\hat P)/n}} \leq 1.96) = 0.95\] The probability is 95% that \(\hat P\) is within 1.96 standard errors of its mean

\[P(\hat P -1.96 \times {\sqrt{\hat P(1-\hat P)/n}} \leq p \leq \hat P + 1.96 \times {\sqrt{\hat P(1-\hat P)/n}}) = 0.95\]

A \((1-\alpha) \times 100\)% confidence interval for \(p\) is \[(\hat P -z^* \times {\sqrt{\hat P(1-\hat P)/n}},\ \hat P + z^* \times {\sqrt{\hat P(1-\hat P)/n}}). \mbox{ $z^*$ quantile $\alpha/2$}\]

\(z^*\) quantile \(\alpha/2\), value such \(\alpha/2\) of the probability in the N(0,1) distribution is below \(z^*\).

Example. Confidence interval for a proportion

A random sample of 826 people living in UK was surveyed to better understand their political preferences. 70% of the responses supported the political party A. Calculate a 95% confidence interval for the proportion of people that support the political party A.

Solution \[\hat p \pm z^* \times SE = \hat p \pm z^* \times \sqrt{\hat p(1-\hat p)/n}\]

Assumptions:

- Observations are independent (they are from a random sample)

- In the sample at least 10 support (\(n \hat p = 826 \times 0.70 = 578 \geq 10\) and at least 10 do not support (\(n(1- \hat p) = 826 \times (1-0.70) = 248 \geq 10\))

Sample statistic: \(\hat p = 24/40 = 0.70\)

Critical value: \(z^* = -1.96\)

(\(\alpha/2=0.05/2\) of the probability in \(N(0,1)\) is below \(z^*\))

\(\hat p \pm z^* \times SE = \hat p \pm z^* \times \sqrt{\hat p(1-\hat p)/n} = 0.70 \pm 1.96 \times 0.016 = (0.669, 0.731)\)

We are 95% confident that the true proportion of people who support political party A is between 0.669 and 0.731.

Confidence interval for a mean

t distribution

- Symmetric and bell-shaped like the normal distribution.

- Mean 0, Heavier tails than the normal distribution. Observations are more likely to fall beyond two standard deviations from the mean than under the normal distribution.

- The t-distribution has a parameter called degrees of freedom (df) that describes its form. When the df increases, the t-distribution approaches the standard normal N(0, 1)

Confidence interval for a mean

\((1-\alpha) \times 100\)% confidence interval for the population mean \(\mu\) \[\bar X \pm t_{n-1}^* \times SE = \bar X \pm t_{n-1}^* \times \frac{S}{\sqrt{n}}\] \(t^*_{n-1}\) value such that \(\alpha/2\) of the probability in \(t(n-1)\) is below \(t^*_{n-1}\)

Assumptions:

- Sample observations are independent.

- Sample observations from a normally distributed population or \(n \geq 30\)

When the population standard deviation is known:

\[\bar X \pm z^* \times SE = \bar X \pm z^* \times \frac{\sigma}{\sqrt{n}}\]

\(z^*\) value such that \(\alpha/2\) of the probability in the N(0,1) is below \(z^*\)

Example. Confidence interval for a mean

In a class survey, students are asked how many hours they sleep per night. In a sample of 52 students, the mean was 5.77 hours with a standard deviation of 1.572 hours. Construct a 95% confidence interval for the mean number of hours slept per night in the population from which this sample was drawn.

Solution

\(\sigma\) is unknown, we use \(s\) and a t distribution with \(n-1=52-1\) df \[\bar x \pm t^*_{51} \times SE = \bar x - t^*_{51} \times \frac{s}{\sqrt{n}}\]

Assumptions:

- We assume data are independent

- \(n \geq 30\)

Sample statistic: \(\bar x = 5.77\)

Critical value: \(t^*_{51}= -2.01\)

(\(\alpha/2=0.05/2\) of the probability in t(51) is below \(t^*_{51}\))

\(\bar x \pm t^*_{51} \times SE = \bar x \pm t^*_{51} \times \frac{s}{\sqrt{n}} = 5.77 \pm 2.01 \times \frac{1.572}{\sqrt{52}} = (5.33, 6.21)\).

We are 95% confident the population mean is between 5.33 and 6.21 hours.

Confidence interval for a mean

\((1-\alpha) \times 100\)% confidence interval for the population mean \(\mu\) \[\bar X \pm t_{n-1}^* \times SE = \bar X \pm t_{n-1}^* \times \frac{S}{\sqrt{n}}\] \(t^*_{n-1}\) value such that \(\alpha/2\) of the probability in \(t(n-1)\) is below \(t^*_{n-1}\)

Assumptions:

- Sample observations are independent.

- Sample observations from a normally distributed population or \(n \geq 30\)

When the population standard deviation is known:

\[\bar X \pm z^* \times SE = \bar X \pm z^* \times \frac{\sigma}{\sqrt{n}}\]

\(z^*\) value such that \(\alpha/2\) of the probability in the N(0,1) is below \(z^*\)

Confidence interval for a mean when \(\sigma\) is known

\[\mbox{Sampling distribution of } \bar X: \ \ \bar X \sim N\left(\mu, SE = \frac{\sigma}{\sqrt{n}}\right)\]

\[\mbox{We standardize } \bar X: \ \ Z = \frac{\bar X - \mu}{\sigma/\sqrt{n}} \sim N(0, 1)\]

\[P(-1.96 \leq \frac{\bar X - \mu}{\sigma/\sqrt{n}} \leq 1.96) = 0.95\]

The probability is about 95% that \(\bar X\) is within 1.96 standard errors of its mean

\[P(\bar X -1.96 \times \frac{\sigma}{\sqrt{n}} \leq \ \mu \leq \bar X + 1.96 \times \frac{\sigma}{\sqrt{n}}) = 0.95\] A \((1-\alpha) \times 100\)% confidence interval for \(\mu\) is \[(\bar X -z^* \times \frac{\sigma}{\sqrt{n}} , \bar X + z^* \times \frac{\sigma}{\sqrt{n}})\]

\(z^*\) quantile \(\alpha/2\), value such \(\alpha/2\) of the probability in the N(0,1) distribution is below \(z^*\)

Confidence interval for a mean when \(\sigma\) is not known

When population standard deviation is not known, we approximate it with the sample standard deviation and use a \(t\) distribution with \(n-1\) degrees of freedom.

\[SE = \frac{\sigma}{\sqrt{n}} \approx \frac{S}{\sqrt{n}}\]

\[T = \frac{\bar X - \mu}{S/\sqrt{n}} \sim t(n-1)\]

A \((1-\alpha) \times 100\)% confidence interval for \(\mu\) is \[(\bar X - t^*_{n-1} \frac{S}{\sqrt{n}},\ \bar X + t^*_{n-1} \frac{S}{\sqrt{n}})\]

\(t^*_{n-1}\) quantile \(\alpha/2\), value such \(\alpha/2\) of the probability in the \(t(n-1)\) distribution is below \(t^*_{n-1}\)

Example. Confidence interval for a mean

Elevated mercury concentrations are an important problem for both dolphins and other animals who occasionally eat them. Calculate a 95% CI for the average mercury content in dolphins using a sample of 19 dolphins in Japan. In the sample, \(n=19\), \(\bar x = 4.4\), \(s = 2.3\), minimum \(= 1.7\) and maximum \(= 9.2\) \(\mu\)g/wet gram (micrograms of mercury per wet gram of muscle).

Solution \(\sigma\) is unknown, we use \(s\) and a t distribution with \(n-1=19-1\) df \[\bar x \pm t^*_{18} \times SE = \bar x \pm t^*_{18} \times \frac{s}{\sqrt{n}}\]

Assumptions:

- Independence seems reasonable because observations come from a random sample.

- Normality seems reasonable because there are not clear outliers (all observations are within 2.5 standard deviations of the mean).

Sample statistic: \(\bar x = 4.4\)

Critical value: \(t^*_{18} = -2.10\)

(\(\alpha/2=0.05/2\) of the probability in the t(18) distribution is below \(t^*_{18}\))

\(\bar x \pm t^*_{18} \times SE = \bar x \pm t^*_{18} \times \frac{s}{\sqrt{n}} = 4.4 \pm 2.10 \times \frac{2.3}{\sqrt{19}} = (3.29, 5.51)\)

We are 95% confident the average mercury content in dolphins is between 3.29 and 5.51 \(\mu\)g/wet gram.

Interpretation of a confidence interval

The interpretation of a 95% CI constructed using a sample of size \(n\) is that if we take many samples of size \(n\) from the population, and calculate the 95% CIs for each sample, then we would expect 95% of these CI to contain the value of the population parameter

Hypothesis tests

Hypothesis tests

Hypothesis testing is a procedure to determine whether a claim about a population parameter is reasonable.

Currently accepted value of population mean age is 18.

Sample mean is 50, far from the currently accepted value 18.

So we reject the current belief that mean age is 18. The data provide evidence to say mean age is greater than 18.

Currently accepted value of population mean age is 18.

Sample mean is 20, close to the currently accepted value 18.

So we fail to reject the current belief that mean age is 18. The data do not provide enough evidence to say mean age is greater than 18.

Hypothesis tests

Hypothesis testing is a procedure to determine whether a claim about a population parameter is reasonable.

- Research question: Does a new drug reduce cholesterol?

The alternative or research hypothesis is the alternative to the currently accepted value for the population parameter

- \(H_1\): The new drug reduces cholesterol

The null hypothesis represents “no difference” (currently accepted value of the population parameter)

- \(H_0\): The new drug has no effect

To test a hypothesis, we select a sample from the population and use measurements from the sample and probability theory to determine how well the data and the null hypothesis agree.

We want to show the alternative hypothesis is reasonable (by showing there is evidence to reject the null hypothesis).

Example

Research question we are trying to answer

Does a new drug reduce cholesterol?Alternative hypothesis (investigator’s belief)

\(H_1\): The new drug reduces cholesterolNull hypothesis (no difference, hypothesis to be rejected)

\(H_0\): The new drug has no effect

Let \(\mu_1\) and \(\mu_2\) be the cholesterol levels obtained with and without the drug, respectively.

\(H_0: \mu_1 = \mu_2\) (Null hypothesis)

\(H_1: \mu_1 < \mu_2\) (Alternative hypothesis)

Null and alternative hypothesis

| Null Hypothesis \(H_0\) | Alternative Hypothesis \(H_1\) |

|---|---|

| Assumed true until evidence indicates otherwise | We try to find evidence for the alternative hypothesis |

| “Nothing is going on” | Opposite of the null hypothesis |

| There is no relationship between variables being studied | There is relationship between variables being studied |

| An intervention does not make a difference/has no effect | An intervention makes a difference/has an effect |

Hypothesis are written in terms of population parameters.

- single mean (\(\mu\)), single proportion (\(p\)), difference between two independent means (\(\mu_1-\mu_2\)), diff between proportions (\(p_1-p_2\))

The null hypothesis \(H_0\) contains the equal sign (=)

Write the null and alternative hypothesis

Is the average monthly rent of a one-bedroom apartment in Madrid less than 800 Euros?

\(H_0\): \(\mu \geq 800\)

\(H_1\): \(\mu < 800\)

Do the majority of all university students own a pet?

\(H_0: p \leq 0.5\)

\(H_1: p > 0.5\)

In preschool, are the weights of boys and girls different?

\(H_0\):

\(H_1\):

In preschool, are the weights of boys and girls different?

\(H_0: \mu_b = \mu_g\)

\(H_1: \mu_b \neq \mu_g\)

Is the average intelligence quotient (IQ) score of all university students higher than 100?

\(H_0\):

\(H_1\):

Is the proportion of men who smoke cigarettes different from the proportion of women who smoke cigarettes in England?

\(H_0\):

\(H_1\):

Is the average intelligence quotient (IQ) score of all university students higher than 100?

\(H_0\): \(\mu \leq 100\)

\(H_1\): \(\mu > 100\)

Is the proportion of men who smoke cigarettes different from the proportion of women who smoke cigarettes in England?

\(H_0: p_1 = p_2\)

\(H_1: p_1 \neq p_2\)

Decisions in hypothesis testing

We make one of these two decisions:

Reject the null (if data provide evidence to disprove it)

Fail to reject the null (if data do not provide enough evidence to disprove it)

Failing to reject the null does not mean we accept the null. It is possible the data we have do not provide enough evidence against the null. But maybe we can collect additional data that provide evidence against the null.

A man goes to trial where he is being tried for a murder.

\(H_0\): Man is not guilty vs. \(H_1\): Man is guilty

It is possible the data we have do not provide enough evidence to reject the null and conclude the man is guilty. But we do not say he is innocent! We may collect additional data that provide evidence and then we can reject the null and say the man is guilty.

Errors in hypothesis testing

| \(H_0\) is True | \(H_0\) is False | |

|---|---|---|

| Reject null | Type I error | Correct |

| Fail to reject null | Correct | Type II error |

Type I error: Reject the null hypothesis when it is true

Type II error: Fail to reject the null hypothesis when it is false

Example

\(H_0\): patient is not pregnant

\(H_1\): patient is pregnant

- Type I error: Reject the null hypothesis when it is true.

Patient is not pregnant but we conclude he is pregnant. - Type II error: Fail to reject the null hypothesis when it is false.

Patient is pregnant but we conclude she is not pregnant.

Example

A man goes to trial where he is being tried for a murder.

\(H_0\): Man is not guilty

\(H_1\): Man is guilty

Type I error: Reject the null hypothesis when it is true.

Type II error: Fail to reject the null hypothesis when it is false.

Example

A man goes to trial where he is being tried for a murder.

\(H_0\): Man is not guilty

\(H_1\): Man is guilty

- Type I error: Reject the null hypothesis when it is true.

The man did not kill the person but was found guilty and was punished for a crime he did not really commit. - Type II error: Fail to reject the null hypothesis when it is false.

The man killed the person but was found not guilty and was not punished.

Significance level

- Before testing the hypothesis, we select the significance level of the test \(\alpha\).

The significance level is the maximum probability of incorrectly rejecting the null when it is true we are willing to tolerate. Typical \(\alpha\) values are 0.05 and 0.01.

\(\alpha\) = P(Type I Error) = P(reject \(H_0\) | \(H_0\) is true)

- If we choose \(\alpha = 0.05\), we have a maximum of 5% chance of incorrectly rejecting the null when it is true.

Steps in hypothesis testing

State the null and alternative hypotheses

Choose the significance level. Usually \(\alpha = 0.05\)

Calculate the test statistic using the sample observations \[\mbox{test statistic} = \frac{\mbox{sample statistic - null parameter}}{\mbox{standard error}}\]

Find the p-value for the observed data

(p-value is the probability of obtaining a test statistic as extreme as or more extreme than the one observed in the direction of the alternative, assuming the null is true)Make a decision and state a conclusion

If p-value < \(\alpha\), we reject the null

If p-value \(\geq \alpha\), we fail to reject the null

Example

A company manufacturing computer chips claims the defective rate is 5%. Let \(p\) denote the true defective probability. We use a sample of 1000 chips from the production to determine their claim is reasonable. The proportion of defectives in the sample is 8%.

1. State the null and alternative hypotheses

\(H_0: p = p_0\) (proportion of defective chips is 5%)

\(H_1: p > p_0\) (proportion of defective chips is greater than 5%)

where \(p_0=0.05\)

2. Choose the significance level

We use a significance level \(\alpha = 0.05\)

So the maximum probability of incorrectly rejecting the null when it is true we are willing to tolerate is 5%.

3. Calculate the test statistic

\[\mbox{test statistic} = \frac{\mbox{sample statistic - null parameter}}{\mbox{standard error}}\]

The test statistic is a value calculated from a sample that summarizes the characteristics of the sample and is used to determine whether to reject or fail to reject the null hypothesis.

The test statistic differs from sample to sample. The sampling distribution of the test statistic under the null must be known so we can compare the results observed to the results expected from the null (e.g., test statistics with normal or t distributions).

Sampling distribution of the test statistic under the null hypothesis.

The value of the test statistic calculated with the sample observations is

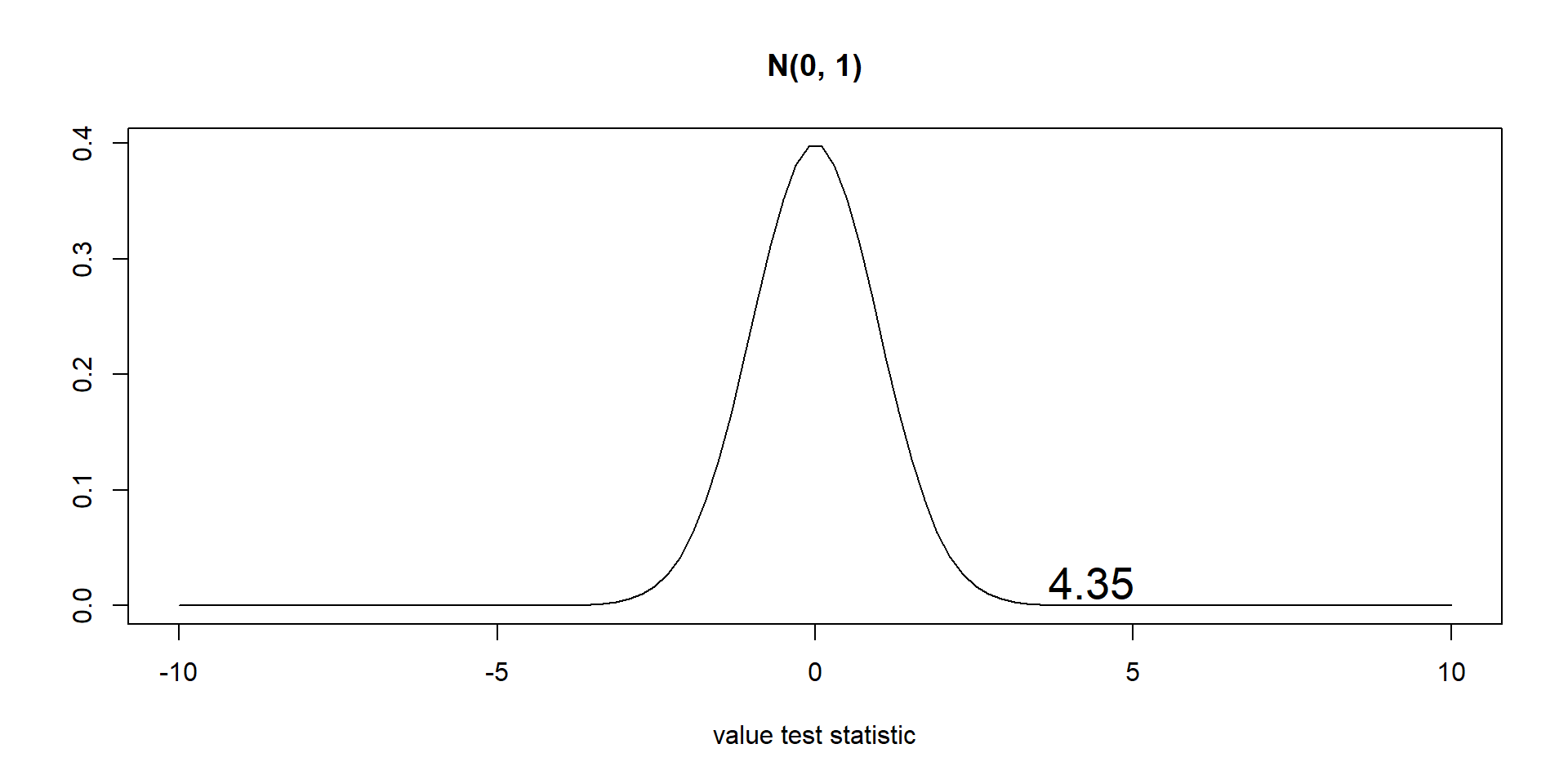

\(\displaystyle{z_{obs} = \frac{0.08-0.05}{\sqrt{0.05(1-0.05)/1000}}}\) = 4.35

4. Find the p-value

We reject the null if the observed value of the test statistic is so extreme that it is unlikely to occur if the null is true (unlikely to occur is given by the significance level \(\alpha\)). Otherwise we fail to reject the null.

The p-value is the probability of obtaining a test statistic as extreme as or more extreme than the one observed in the direction of the alternative hypothesis, assuming the null hypothesis is true.

To calculate the p-value we compute the test statistic value using the sample data, and calculate the area under the test statistic distribution curve beyond the test statistic value.

5. Compare p-value and \(\alpha\) and make a decision

We compare the p-value to the significance level \(\alpha\) and decide whether to reject or fail to reject the null.

If p-value is small (p-value \(<\) \(\alpha\)) we had little chance of getting our data if the null hypothesis is true. So we reject the null. We conclude there is evidence against the null hypothesis.

If p-value is big (p-value \(\geq\) \(\alpha\)) there is reasonable chance of getting our data if the null hypothesis is true. So we fail to reject the null. We conclude there is not sufficient evidence against the null hypothesis.

1. State the null and alternative hypotheses

\(H_0: p = p_0\) (proportion of defective chips is 5%)

\(H_1: p > p_0\) (proportion of defective chips is greater than 5%)

where \(p_0=0.05\)

2. Choose the significance level

We use a significance level \(\alpha = 0.05\)

So the maximum probability of incorrectly rejecting the null when it is true we are willing to tolerate is 5%.

3. Calculate the test statistic

Sample statistic is proportion of defective chips in the sample (\(\hat P\)).

Test statistic \[Z = \frac{\hat P - p_0}{\sqrt{\frac{p_0(1-p_0)}{n}}} \sim N(0,1)\]

The test statistic \(Z\) follows a N(0, 1) distribution under the null hypothesis (this holds if we have independent observations and the number of successes and failures are both greater than 10).

\(z_{obs} = \frac{0.08-0.05}{\sqrt{0.05(1-0.05)/1000}}\) = 4.35.

4. Find the p-value

p-value is the area under the N(0,1) curve beyond the test statistic observed in the direction of the alternative hypothesis

p-value = \(P(Z > 4.35) \approx 0\)

5. Make a decision and state a conclusion

p-value is approximately 0 and \(\alpha=0.05\)

p-value < \(\alpha\) so we reject the null, data provide evidence to say the population proportion of defective chips is greater than 5%.

One-sided and two-sided tests

One-sided (or one-tailed) test

The alternative hypothesis specifies that the population parameter lies entirely above or below the value specified in \(H_0\)

\(H_0: \mu=100\)

\(H_1: \mu>100\)

This is tested in the same way as

\(H_0: \mu\leq100\)

\(H_1: \mu>100\)

(if we conclude \(\mu>100\), we also conclude \(\mu>90\), \(\mu>80\), etc)

Two-sided (or two-tailed) test

The alternative hypothesis specifies that the parameter can lie on either side of the value specified by \(H_0\)

\(H_0: \mu=100\) vs. \(H_1: \mu \neq 100\)

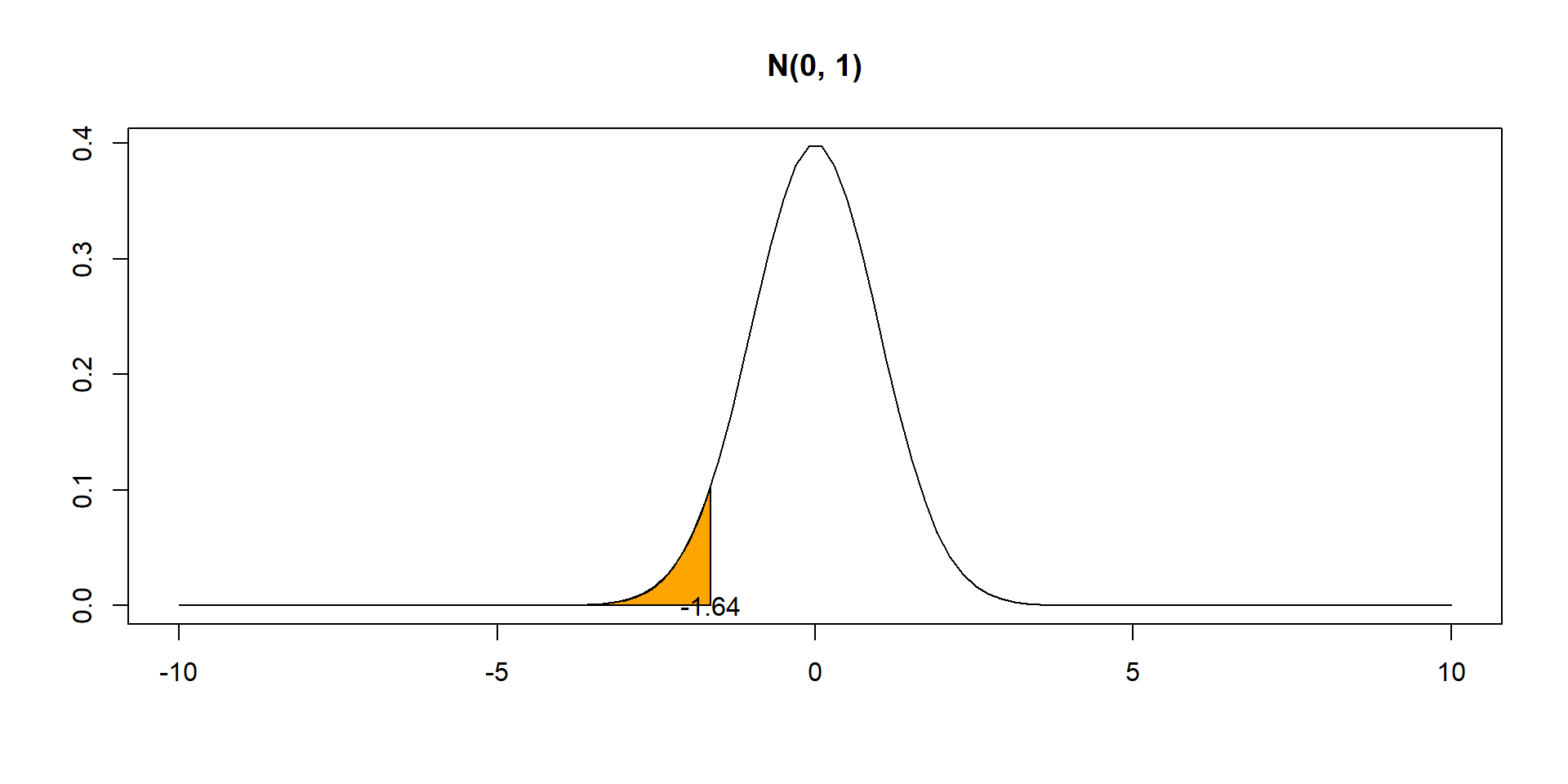

p-values in one- and two- tailed tests

Left-tailed tests: p-value is the area under the test statistic distribution curve to the left of the test statistic

Right-tailed tests: p-value is the area under the test statistic distribution curve to the right of the test statistic

Two-tailed tests: we use the symmetry of the test distribution curve. Calculate the p-value for a one-sided test and double it

Exercises hypothesis testing

ANOVA

ANOVA

ANOVA is a statistical procedure to test if the means of two or more groups are significantly different from each other.

\(H_0: \mu_1 = \mu_2 = \ldots = \mu_k\)

\(H_1:\) not all \(\mu_i\) are equal

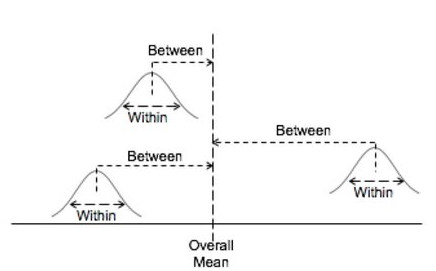

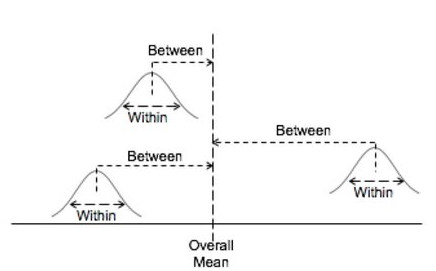

ANOVA

ANOVA separates the total variation in the data into variation between groups and variation within groups.

ANOVA uses a test statistic called F statistic that compares the variability between groups to the variability within groups.

\[\mbox{F = between groups variability / within groups variability}\] If the variability between groups dominates the variability within groups, we find evidence to suggest population means are different.

ANOVA

F distribution

The F distribution F(df1, df2) depends on two parameters:

degrees of freedom df1 and degrees of freedom df2

- The F-distribution is \(\geq 0\) and skewed to the right

- Shape changes with df1 and df2

Example

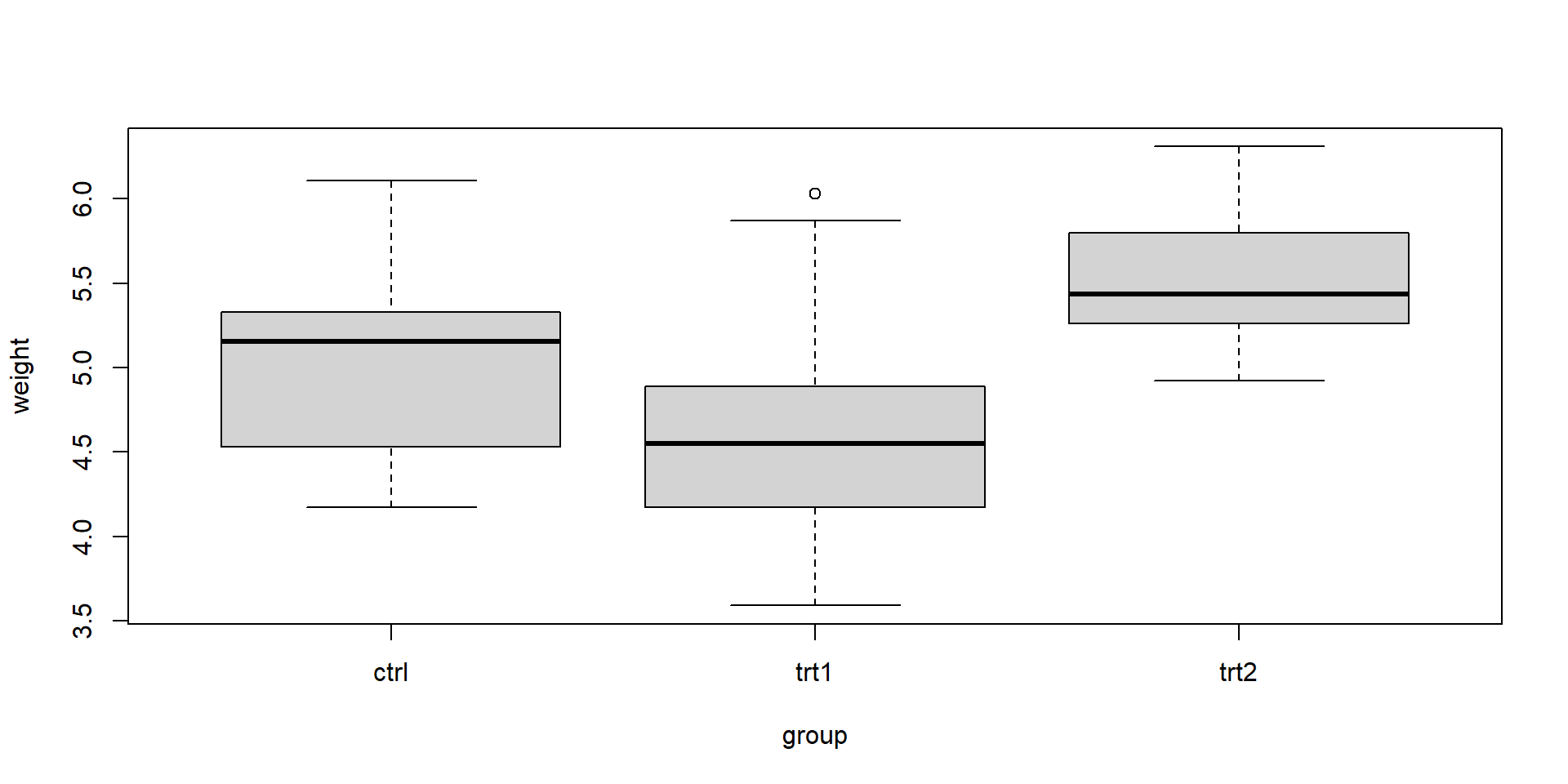

Consider an experiment to compare plant yields under a control and two different treatment conditions. We collect the dried weight of plants for the control group and the two treatments.

We use ANOVA to test whether the average plant weights for each treatment are statistically different from each other.

\(H_0: \mu_1 = \mu_2 = \mu_3\)

\(H_1:\) not all \(\mu_i\) are equal

Data

The groups are control, treatment 1 and treatment 2.

Group.1 x.length x.mean x.sd

1 ctrl 10.0000000 5.0320000 0.5830914

2 trt1 10.0000000 4.6610000 0.7936757

3 trt2 10.0000000 5.5260000 0.4425733

We see mean weights are different between groups. Are they statistically different or just different due to sampling variability?

Boxplot

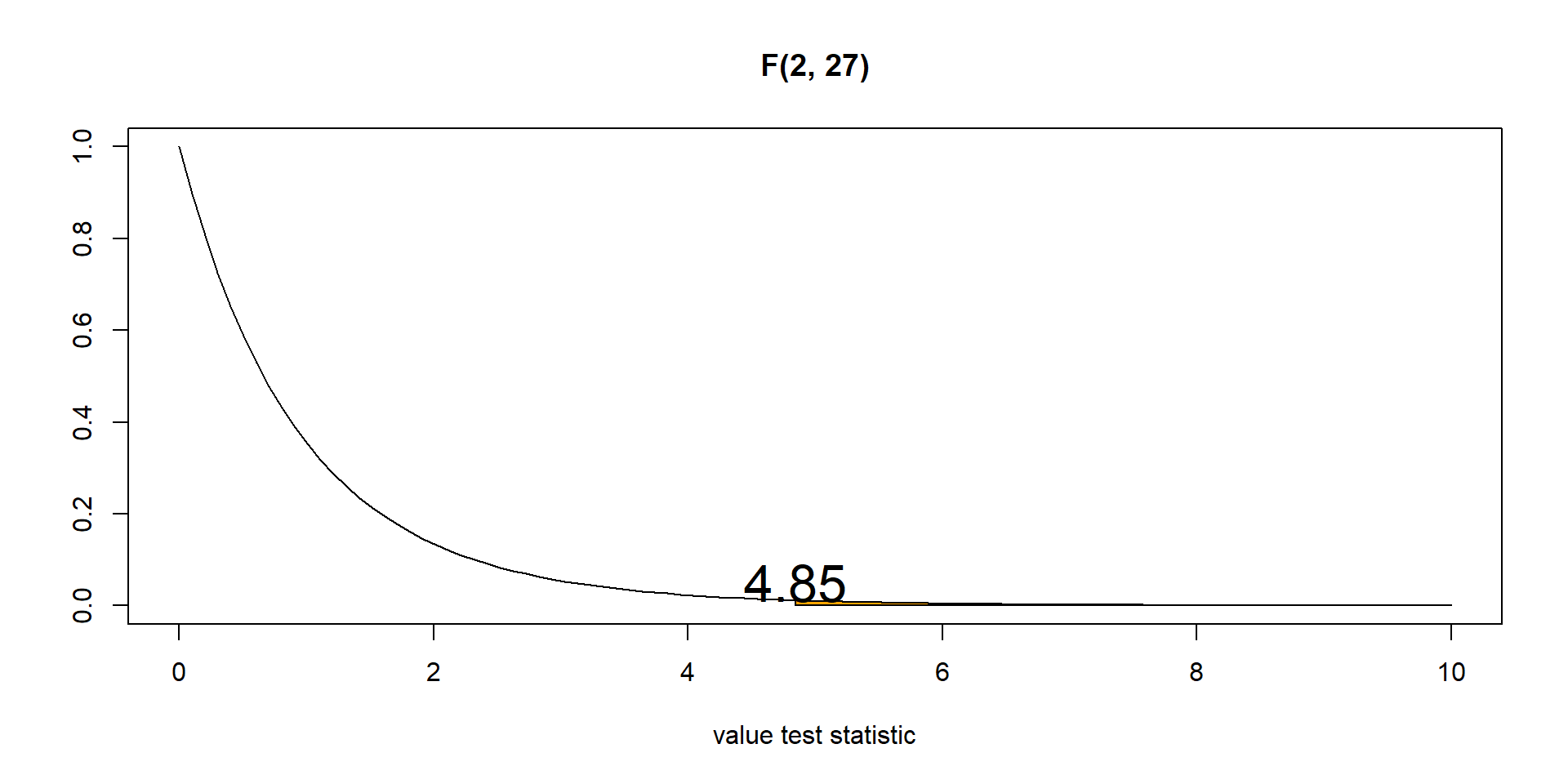

ANOVA

We use ANOVA to test if the weight means for each treatment are statistically different or are just different due to sampling variability.

Null and alternative hypotheses

\(H_0: \mu_1 = \mu_2 = \mu_3\)

\(H_1:\) not all \(\mu_i\) are equalWe set the significance level

\(\alpha = 0.05\) (maximum probability of incorrectly rejecting the null when is true we are willing to tolerate)F statistic compares variation between groups to variation within groups. \[F = \frac{\mbox{between groups variability}}{\mbox{within groups variability}} \sim F(k-1, n-k) = F(3-1, 30-3)\] \(k-1\): number of groups - 1

\(n-k\): number of observations - number of groups

aov()

We conduct ANOVA with the aov() function.

We get F statistic = 4.85 and p-value = 0.016.

\(F = \frac{\mbox{between groups variability}}{\mbox{within groups variability}} \sim F(k-1, n-k) = F(2, 27)\) \(F_{obs} = 4.85\)

- The p-value is the probability of obtaining an F-statistic value equal or more extreme than the observed, assuming the null is true. p-value is the area under the F(2,27) curve to the right of the F statistic 4.85. p-value = 0.016.

- p-value \(< \alpha\) (0.016 < 0.05) , so we reject the null. There is evidence that at least two of the group means are different.

ANOVA table

| Source | SS | df | MS | F | P |

|---|---|---|---|---|---|

| Between | SSB | k-1 | MSB=SSB/(k-1) | MSB/MSW | P |

| Within | SSW | n-k | MSW=SSW/(n-k) | ||

| Total | SST | n-1 |

SS: Sum of Squares (Sum of Squares of the deviations)

\(\mbox{SST = SSB + SSW}\)

- SSB = \(\sum_k n_k(\bar x_k-\bar x)^2\) variation BETWEEN groups.

- SSW = \(\sum_k \sum_i (x_{ik}-\bar x_{k})^2\) variation WITHIN the groups.

- SST = \(\sum_k \sum_i (x_{ik}-\bar x)^2\) TOTAL variation in the data from the grand mean of all groups combined (\(\bar x = \frac{\sum_k \sum_i x_{ik}}{n}\))

\(k\) number of groups, \(n\) total sample size (all groups combined),

\(n_k\) sample size of group \(k\), \(\bar x_k\) sample mean of group \(k\)

ANOVA table

| Source | SS | df | MS | F | P |

|---|---|---|---|---|---|

| Between | SSB | k-1 | MSB=SSB/(k-1) | MSB/MSW | P |

| Within | SSW | n-k | MSW=SSW/(n-k) | ||

| Total | SST | n-1 |

df: Degrees of freedom

\(n-1 = (k-1)+(n-k)\)

- Degrees of freedom of SSB = \(k-1\). Variation of the \(k\) group means about the overall mean

- Degrees of freedom of SSW = \(n-k\). Variation of the \(n\) observations about \(k\) group means

- Degrees of freedom of SST = \(n-1\). Variation of all \(n\) observations about the overall mean

ANOVA table

| Source | SS | df | MS | F | P |

|---|---|---|---|---|---|

| Between | SSB | k-1 | MSB=SSB/(k-1) | MSB/MSW | P |

| Within | SSW | n-k | MSW=SSW/(n-k) | ||

| Total | SST | n-1 |

SS: Sum of Squares (Sum of Squares of the deviations)

\(\mbox{SST = SSB + SSW}\)

df: Degrees of freedom

\(n-1 = (k-1)+(n-k)\)

Mean Squares MS = SS/df

F-statistic = MSB/MSW

\(\sim\) F(num groups - 1, num observ - num groups) = F(k-1, n-k)

P: p-value = \(P(F > F_{obs})\)

Example

| Source | SS | DF | MS |

|---|---|---|---|

| Between groups | 258 | ? | ? |

| Within groups | ? | 26 | ? |

| Total | 898 | 29 |

- Complete the table.

- How many groups were there in the study?

- How many total observations were there in the study?

Example

| Source | SS | DF | MS |

|---|---|---|---|

| Between groups | 258 | ? | ? |

| Within groups | ? | 26 | ? |

| Total | 898 | 29 |

SSW = 898-258=640

df(B) = 29-26=3

MSB = 258/3 = 86

MSW = 640/26 = 24.61

df(B) = k-1 = 3 Thus, 4 groups

df(W) = n-k = 26

df(T) = n-1 = 29 Thus, 30 observations

Example

| Source | SS | DF | MS |

|---|---|---|---|

| Between groups | ? | 3 | 45 |

| Within groups | 337 | 12 | ? |

| Total | 472 | ? |

- Complete the table.

- How many groups were there in the study?

- How many total observations were there in the study?

Example

| Source | SS | DF | MS |

|---|---|---|---|

| Between groups | ? | 3 | 45 |

| Within groups | 337 | 12 | ? |

| Total | 472 | ? |

SSB = 472-337=135

df(T) = 3+12=15

MSB = 135/3 = 45

MSW = 337/12 = 28.08

df(B) = k-1 = 3 Thus, 4 groups

df(W) = n-k = 12

df(T) = n-1 = 15 Thus, 16 observations